“We’ll drink a cup of kindness yet…”

The Majority Report w/ Sam Seder: Author Johann Hari (@johannhari101) joins us to discuss his new book, “Lost Connections: Uncovering the Real Causes of Depression — and the Unexpected Solutions.” The biological makeup of humans has not changed for many centuries, but depression, anxiety and addiction are on the rise. For this book, Hari traveled around the world and interviewed experts to identify the sources of our pain, including lack of control over one’s work conditions, childhood trauma, and the isolation of modern life. He traces many of these problems back to neoliberal capitalism, as well as forward to antisocial behavior as it manifests in the political sphere.

Shahid Yousuf Khan: Iranian short film (just over 1 min) that won an award at the Luxor Film Festival. Persian film, short film award, Iran. Thursday Appointment | #LuxorAward #ShortFilm #IranianMovies

(Submitted by Ugur Yilmaz)

The Atlantic|getpocket.com

Wikimedia / donatas1205 / Billion Photos / vgeny Karandaev / The Atlantic

The history of computers is often told as a history of objects, from the abacus to the Babbage engine up through the code-breaking machines of World War II. In fact, it is better understood as a history of ideas, mainly ideas that emerged from mathematical logic, an obscure and cult-like discipline that first developed in the 19th century. Mathematical logic was pioneered by philosopher-mathematicians, most notably George Boole and Gottlob Frege, who were themselves inspired by Leibniz’s dream of a universal “concept language,” and the ancient logical system of Aristotle.

Mathematical logic was initially considered a hopelessly abstract subject with no conceivable applications. As one computer scientist commented: “If, in 1901, a talented and sympathetic outsider had been called upon to survey the sciences and name the branch which would be least fruitful in [the] century ahead, his choice might well have settled upon mathematical logic.” And yet, it would provide the foundation for a field that would have more impact on the modern world than any other.

The evolution of computer science from mathematical logic culminated in the 1930s, with two landmark papers: Claude Shannon’s “A Symbolic Analysis of Relay and Switching Circuits,” and Alan Turing’s “On Computable Numbers, With an Application to the Entscheidungsproblem.” In the history of computer science, Shannon and Turing are towering figures, but the importance of the philosophers and logicians who preceded them is frequently overlooked.

A well-known history of computer science describes Shannon’s paper as “possibly the most important, and also the most noted, master’s thesis of the century.” Shannon wrote it as an electrical engineering student at MIT. His adviser, Vannevar Bush, built a prototype computer known as the Differential Analyzer that could rapidly calculate differential equations. The device was mostly mechanical, with subsystems controlled by electrical relays, which were organized in an ad hoc manner as there was not yet a systematic theory underlying circuit design. Shannon’s thesis topic came about when Bush recommended he try to discover such a theory.

Shannon’s paper is in many ways a typical electrical-engineering paper, filled with equations and diagrams of electrical circuits. What is unusual is that the primary reference was a 90-year-old work of mathematical philosophy, George Boole’s The Laws of Thought.

Today, Boole’s name is well known to computer scientists (many programming languages have a basic data type called a Boolean), but in 1938 he was rarely read outside of philosophy departments. Shannon himself encountered Boole’s work in an undergraduate philosophy class. “It just happened that no one else was familiar with both fields at the same time,” he commented later.

Boole is often described as a mathematician, but he saw himself as a philosopher, following in the footsteps of Aristotle. The Laws of Thought begins with a description of his goals, to investigate the fundamental laws of the operation of the human mind:

The design of the following treatise is to investigate the fundamental laws of those operations of the mind by which reasoning is performed; to give expression to them in the symbolical language of a Calculus, and upon this foundation to establish the science of Logic … and, finally, to collect … some probable intimations concerning the nature and constitution of the human mind.

He then pays tribute to Aristotle, the inventor of logic, and the primary influence on his own work:

In its ancient and scholastic form, indeed, the subject of Logic stands almost exclusively associated with the great name of Aristotle. As it was presented to ancient Greece in the partly technical, partly metaphysical disquisitions of The Organon, such, with scarcely any essential change, it has continued to the present day.

Trying to improve on the logical work of Aristotle was an intellectually daring move. Aristotle’s logic, presented in his six-part book The Organon, occupied a central place in the scholarly canon for more than 2,000 years. It was widely believed that Aristotle had written almost all there was to say on the topic. The great philosopher Immanuel Kant commented that, since Aristotle, logic had been “unable to take a single step forward, and therefore seems to all appearance to be finished and complete.”

Aristotle’s central observation was that arguments were valid or not based on their logical structure, independent of the non-logical words involved. The most famous argument schema he discussed is known as the syllogism:

All men are mortal.

Socrates is a man.

Therefore, Socrates is mortal.

You can replace “Socrates” with any other object, and “mortal” with any other predicate, and the argument remains valid. The validity of the argument is determined solely by the logical structure. The logical words (“all,” “is,” “are,” and “therefore”) are doing all the work.

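Aristotle also defined a set of basic axioms from which he derived the rest of his logical system:

An object is what it is (Law of Identity).

No statement can be both true and false (Law of Non-contradiction).

Every statement is either true or false (Law of the Excluded Middle).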

These axioms weren’t meant to describe how people actually think (that would be the realm of psychology), but how an idealized, perfectly rational person ought to think.

Aristotle’s axiomatic method influenced an even more famous book, Euclid’s Elements, which is estimated to be second only to the Bible in the number of editions printed.

A fragment of the Elements. Wikimedia Commons.

Although ostensibly about geometry, the Elements became a standard textbook for teaching rigorous deductive reasoning. (Abraham Lincoln once said that he learned sound legal argumentation from studying Euclid.) In Euclid’s system, geometric ideas were represented as spatial diagrams. Geometry continued to be practiced this way until René Descartes, in the 1630s, showed that geometry could instead be represented as formulas. His Discourse on Method was the first mathematics text in the West to popularize what is now standard algebraic notation — x, y, z for variables, a, b, c for known quantities, and so on.

Descartes’s algebra allowed mathematicians to move beyond spatial intuitions to manipulate symbols using precisely defined formal rules. This shifted the dominant mode of mathematics from diagrams to formulas, leading to, among other things, the development of calculus, invented independently by Isaac Newton and Gottfried Leibniz roughly 30 years after Descartes.

Boole’s goal was to do for Aristotelian logic what Descartes had done for Euclidean geometry: free it from the limits of human intuition by giving it a precise algebraic notation. To give a simple example, when Aristotle wrote:

All men are mortal.

Boole replaced the words “men” and “mortal” with variables, and the logical words “all” and “are” with arithmetical operators:

x = x * y

Which could be interpreted as “Everything in the set x is also in the set y.”
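Boole’s equation can be checked mechanically. The following is only a rough sketch, not Boole’s own notation: it assumes classes are modeled as Python sets and that Boole’s multiplication corresponds to set intersection. The names all_are, men, and mortals are hypothetical.

```python
# Illustrative sketch: Boole's "x = x * y" read as a statement about sets.
# Assumption: Boolean multiplication is modeled as set intersection (&).

def all_are(x: set, y: set) -> bool:
    """Return True when 'all x are y', i.e. x = x * y in Boole's algebra."""
    return x == (x & y)

# Hypothetical example classes
men = {"Socrates", "Plato"}
mortals = {"Socrates", "Plato", "Bucephalus"}

print(all_are(men, mortals))   # every man is also in the set of mortals
print(all_are(mortals, men))   # but not every mortal is a man
```

Intersecting x with y leaves x unchanged exactly when nothing in x falls outside y, which is why the single equation captures the whole sentence.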

The Laws of Thought created a new scholarly field, mathematical logic, which in the following years became one of the most active areas of research for mathematicians and philosophers. Bertrand Russell called The Laws of Thought “the work in which pure mathematics was discovered.”

Shannon’s insight was that Boole’s system could be mapped directly onto electrical circuits. At the time, electrical circuits had no systematic theory governing their design. Shannon realized that the right theory would be “exactly analogous to the calculus of propositions used in the symbolic study of logic.”

He showed the correspondence between electrical circuits and Boolean operations in a simple chart:

Shannon’s mapping from electrical circuits to symbolic logic. University of Virginia.

This correspondence allowed computer scientists to import decades of work in logic and mathematics by Boole and subsequent logicians. In the second half of his paper, Shannon showed how Boolean logic could be used to create a circuit for adding two binary digits.

Shannon’s adder circuit. University of Virginia.

By stringing these adder circuits together, arbitrarily complex arithmetical operations could be constructed. These circuits would become the basic building blocks of what are now known as arithmetical logic units, a key component in modern computers.
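To make the idea of stringing adders together concrete, here is a minimal sketch in Python rather than in relays. It assumes bits are the integers 0 and 1 and uses Python’s bitwise operators for XOR, AND, and OR; the function names are illustrative and do not come from Shannon’s paper.

```python
# Sketch of a binary adder built only from Boolean operations.
# Assumption: bits are Python ints 0/1; ^ is XOR, & is AND, | is OR.

def half_adder(a, b):
    """Add two bits; return (sum_bit, carry_bit)."""
    return a ^ b, a & b

def full_adder(a, b, carry_in):
    """Add two bits plus an incoming carry; return (sum_bit, carry_out)."""
    s1, c1 = half_adder(a, b)
    s2, c2 = half_adder(s1, carry_in)
    return s2, c1 | c2

def ripple_add(x, y):
    """Add two equal-length bit lists, least significant bit first."""
    carry = 0
    out = []
    for a, b in zip(x, y):
        s, carry = full_adder(a, b, carry)
        out.append(s)
    out.append(carry)  # the final carry becomes the top bit
    return out

# 3 (binary 011) + 6 (binary 110), written least significant bit first
print(ripple_add([1, 1, 0], [0, 1, 1]))  # [1, 0, 0, 1], i.e. binary 1001 = 9
```

Each full adder handles one column of the sum and passes its carry to the next, the same way carries move leftward in pencil-and-paper addition.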

Another way to characterize Shannon’s achievement is that he was first to distinguish between the logical and the physical layer of computers. (This distinction has become so fundamental to computer science that it might seem surprising to modern readers how insightful it was at the time—a reminder of the adage that “the philosophy of one century is the common sense of the next.”)

Since Shannon’s paper, a vast amount of progress has been made on the physical layer of computers, including the invention of the transistor in 1947 by William Shockley and his colleagues at Bell Labs. Transistors are dramatically improved versions of Shannon’s electrical relays — the best known way to physically encode Boolean operations. Over the next 70 years, the semiconductor industry packed more and more transistors into smaller spaces. A 2016 iPhone has about 3.3 billion transistors, each one a “relay switch” like those pictured in Shannon’s diagrams.

While Shannon showed how to map logic onto the physical world, Turing showed how to design computers in the language of mathematical logic. When Turing wrote his paper, in 1936, he was trying to solve “the decision problem,” first identified by the mathematician David Hilbert, who asked whether there was an algorithm that could determine whether an arbitrary mathematical statement is true or false. In contrast to Shannon’s paper, Turing’s paper is highly technical. Its primary historical significance lies not in its answer to the decision problem, but in the template for computer design it provided along the way.

Turing was working in a tradition stretching back to Gottfried Leibniz, the philosophical giant who developed calculus independently of Newton. Among Leibniz’s many contributions to modern thought, one of the most intriguing was the idea of a new language he called the “universal characteristic” that, he imagined, could represent all possible mathematical and scientific knowledge. Inspired in part by the 13th-century religious philosopher Ramon Llull, Leibniz postulated that the language would be ideographic like Egyptian hieroglyphics, except characters would correspond to “atomic” concepts of math and science. He argued this language would give humankind an “instrument” that could enhance human reason “to a far greater extent than optical instruments” like the microscope and telescope.

He also imagined a machine that could process the language, which he called the calculus ratiocinator.

If controversies were to arise, there would be no more need of disputation between two philosophers than between two accountants. For it would suffice to take their pencils in their hands, and say to each other: Calculemus—Let us calculate.

Leibniz didn’t get the opportunity to develop his universal language or the corresponding machine (although he did invent a relatively simple calculating machine, the stepped reckoner). The first credible attempt to realize Leibniz’s dream came in 1879, when the German philosopher Gottlob Frege published his landmark logic treatise Begriffsschrift. Inspired by Boole’s attempt to improve Aristotle’s logic, Frege developed a much more advanced logical system. The logic taught in philosophy and computer-science classes today—first-order or predicate logic—is only a slight modification of Frege’s system.

Frege is generally considered one of the most important philosophers of the 19th century. Among other things, he is credited with catalyzing what noted philosopher Richard Rorty called the “linguistic turn” in philosophy. As Enlightenment philosophy was obsessed with questions of knowledge, philosophy after Frege became obsessed with questions of language. His disciples included two of the most important philosophers of the 20th century—Bertrand Russell and Ludwig Wittgenstein.

The major innovation of Frege’s logic is that it much more accurately represented the logical structure of ordinary language. Among other things, Frege was the first to use quantifiers (“for every,” “there exists”) and to separate objects from predicates. He was also the first to develop what today are fundamental concepts in computer science like recursive functions and variables with scope and binding.

Frege’s formal language, what he called his “concept-script,” is made up of meaningless symbols that are manipulated by well-defined rules. The language is only given meaning by an interpretation, which is specified separately (this distinction would later come to be called syntax versus semantics). This turned logic into what the eminent computer scientists Allen Newell and Herbert Simon called “the symbol game,” “played with meaningless tokens according to certain purely syntactic rules.”

All meaning had been purged. One had a mechanical system about which various things could be proved. Thus progress was first made by walking away from all that seemed relevant to meaning and human symbols.

As Bertrand Russell famously quipped: “Mathematics may be defined as the subject in which we never know what we are talking about, nor whether what we are saying is true.”

An unexpected consequence of Frege’s work was the discovery of weaknesses in the foundations of mathematics. For example, Euclid’s Elements — considered the gold standard of logical rigor for thousands of years — turned out to be full of logical mistakes. Because Euclid used ordinary words like “line” and “point,” he — and centuries of readers — deceived themselves into making assumptions about sentences that contained those words. To give one relatively simple example, in ordinary usage, the word “line” implies that if you are given three distinct points on a line, one point must be between the other two. But when you define “line” using formal logic, it turns out “between-ness” also needs to be defined—something Euclid overlooked. Formal logic makes gaps like this easy to spot.

This realization created a crisis in the foundation of mathematics. If the Elements — the bible of mathematics — contained logical mistakes, what other fields of mathematics did too? What about sciences like physics that were built on top of mathematics?

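The good news is that the same logical methods used to uncover these errors could also be used to correct them. Mathematicians started rebuilding the foundations of mathematics from the bottom up. In 1889, Giuseppe Peano developed axioms for arithmetic, and in 1899, David Hilbert did the same for geometry. Hilbert also outlined a program to formalize the remainder of mathematics, with specific requirements that any such attempt should satisfy, including:

Completeness: There should be a proof that all true mathematical statements can be proved in the formal system.

Decidability: There should be an algorithm for deciding the truth or falsity of any mathematical statement. (This is the “Entscheidungsproblem,” or “decision problem,” referenced in Turing’s paper.)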

Rebuilding mathematics in a way that satisfied these requirements became known as Hilbert’s program. Up through the 1930s, this was the focus of a core group of logicians including Hilbert, Russell, Kurt Gödel, John von Neumann, Alonzo Church, and, of course, Alan Turing.

Hilbert’s program proceeded on at least two fronts. On the first front, logicians created logical systems that tried to prove Hilbert’s requirements either satisfiable or not.

On the second front, mathematicians used logical concepts to rebuild classical mathematics. For example, Peano’s system for arithmetic starts with a simple function called the successor function which increases any number by one. He uses the successor function to recursively define addition, uses addition to recursively define multiplication, and so on, until all the operations of number theory are defined. He then uses those definitions, along with formal logic, to prove theorems about arithmetic.
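The recursive construction described above can be sketched in a few lines. This is a simplified illustration, not Peano’s formal system: it assumes natural numbers are represented by non-negative Python integers and uses b - 1 as a stand-in for “the number whose successor is b.”

```python
# Sketch of Peano-style arithmetic: everything is built from successor.
# Assumption: naturals are non-negative Python ints, and "b - 1" stands in
# for taking the predecessor of a successor.

def successor(n):
    return n + 1

def add(a, b):
    # a + 0 = a;  a + S(b) = S(a + b)
    if b == 0:
        return a
    return successor(add(a, b - 1))

def multiply(a, b):
    # a * 0 = 0;  a * S(b) = (a * b) + a
    if b == 0:
        return 0
    return add(multiply(a, b - 1), a)

print(add(2, 3))       # 5
print(multiply(4, 3))  # 12
```

Addition never uses Python’s + on its two arguments directly; it only counts successors, and multiplication only calls addition, which mirrors the layering in Peano’s definitions.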

The historian Thomas Kuhn once observed that “in science, novelty emerges only with difficulty.” Logic in the era of Hilbert’s program was a tumultuous process of creation and destruction. One logician would build up an elaborate system and another would tear it down.

The favored tool of destruction was the construction of self-referential, paradoxical statements that showed the axioms from which they were derived to be inconsistent. A simple form of this “liar’s paradox” is the sentence:

This sentence is false.

If it is true then it is false, and if it is false then it is true, leading to an endless loop of self-contradiction.

Russell made the first notable use of the liar’s paradox in mathematical logic. He showed that Frege’s system allowed self-contradicting sets to be derived:

Let R be the set of all sets that are not members of themselves. If R is not a member of itself, then its definition dictates that it must contain itself, and if it contains itself, then it contradicts its own definition as the set of all sets that are not members of themselves.

This became known as Russell’s paradox and was seen as a serious flaw in Frege’s achievement. (Frege himself was shocked by this discovery. He replied to Russell: “Your discovery of the contradiction caused me the greatest surprise and, I would almost say, consternation, since it has shaken the basis on which I intended to build my arithmetic.”)
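The self-defeating character of R can be felt in code. The sketch below is a loose analogy rather than a formalization of Frege’s system: it assumes a “set” is modeled as a membership predicate, so that s(x) is true when x is a member of s. The names russell and contains_everything are hypothetical.

```python
# Illustrative analogy only: model a "set" as a membership predicate,
# so that s(x) is True when x is a member of s.

def russell(s):
    """R contains s exactly when s does not contain itself."""
    return not s(s)

def contains_everything(x):
    """A 'set' that has every object as a member, including itself."""
    return True

print(russell(contains_everything))  # False: it is a member of itself

try:
    russell(russell)  # is R a member of R? Each answer demands its opposite.
except RecursionError:
    print("no consistent answer: the question never bottoms out")
```

Asking whether R contains itself forces the function to ask the same question again with the answer negated, so the evaluation never settles on true or false.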

Russell and his colleague Alfred North Whitehead put forth the most ambitious attempt to complete Hilbert’s program with the Principia Mathematica, published in three volumes between 1910 and 1913. The Principia’s method was so detailed that it took over 300 pages to get to the proof that 1+1=2.

Russell and Whitehead tried to resolve Russell’s paradox by introducing what they called type theory. The idea was to partition formal languages into multiple levels or types. Each level could make reference to levels below, but not to its own or higher levels. This resolved self-referential paradoxes by, in effect, banning self-reference. (This solution was not popular with logicians, but it did influence computer science: most modern computer languages have features inspired by type theory.)

Self-referential paradoxes ultimately showed that Hilbert’s program could never be successful. The first blow came in 1931, when Gödel published his now famous incompleteness theorem, which proved that any consistent logical system powerful enough to encompass arithmetic must also contain statements that are true but cannot be proven to be true. (Gödel’s incompleteness theorem is one of the few logical results that has been broadly popularized, thanks to books like Gödel, Escher, Bach and The Emperor’s New Mind.)

The final blow came when Turing and Alonzo Church independently proved that no algorithm could exist that determined whether an arbitrary mathematical statement was true or false. (Church did this by inventing an entirely different system called the lambda calculus, which would later inspire computer languages like Lisp.) The answer to the decision problem was negative.

Turing’s key insight came in the first section of his famous 1936 paper, “On Computable Numbers, With an Application to the Entscheidungsproblem.” In order to rigorously formulate the decision problem (the “Entscheidungsproblem”), Turing first created a mathematical model of what it means to be a computer (today, machines that fit this model are known as “universal Turing machines”). As the logician Martin Davis describes it:

Turing knew that an algorithm is typically specified by a list of rules that a person can follow in a precise mechanical manner, like a recipe in a cookbook. He was able to show that such a person could be limited to a few extremely simple basic actions without changing the final outcome of the computation.

Then, by proving that no machine performing only those basic actions could determine whether or not a given proposed conclusion follows from given premises using Frege’s rules, he was able to conclude that no algorithm for the Entscheidungsproblem exists.

As a byproduct, he found a mathematical model of an all-purpose computing machine.
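The “few extremely simple basic actions” Davis mentions amount to reading a symbol, writing a symbol, moving one cell left or right, and changing state. A minimal simulator might look like the sketch below. The rule format, the state names, and the example machine are assumptions made for illustration and are not taken from Turing’s paper.

```python
# Minimal Turing machine sketch. The rule format is an assumption for
# illustration: (state, symbol) -> (symbol_to_write, head_move, next_state).

def run(rules, tape, state="start", blank="_", max_steps=1000):
    cells = dict(enumerate(tape))  # sparse tape: position -> symbol
    head = 0
    for _ in range(max_steps):
        if state == "halt":
            break
        symbol = cells.get(head, blank)          # read
        write, move, state = rules[(state, symbol)]
        cells[head] = write                      # write
        head += 1 if move == "R" else -1         # move one cell
    return "".join(cells[i] for i in sorted(cells)).strip(blank)

# Hypothetical example machine: add 1 to a unary number by walking
# right over the existing 1s and writing one more at the first blank.
increment = {
    ("start", "1"): ("1", "R", "start"),
    ("start", "_"): ("1", "R", "halt"),
}

print(run(increment, "111"))  # 1111
```

Note that the machine’s behavior lives entirely in the increment table, which is ordinary data passed to run; swapping in a different table yields a different machine without touching the simulator.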

Next, Turing showed how a program could be stored inside a computer alongside the data upon which it operates. In today’s vocabulary, we’d say that he invented the “stored-program” architecture that underlies most modern computers:

Before Turing, the general supposition was that in dealing with such machines the three categories — machine, program, and data — were entirely separate entities. The machine was a physical object; today we would call it hardware. The program was the plan for doing a computation, perhaps embodied in punched cards or connections of cables in a plugboard. Finally, the data was the numerical input. Turing’s universal machine showed that the distinctness of these three categories is an illusion.

This was the first rigorous demonstration that any computing logic that could be encoded in hardware could also be encoded in software. The architecture Turing described was later dubbed the “Von Neumann architecture” — but modern historians generally agree it came from Turing, as, apparently, did Von Neumann himself.

Although, on a technical level, Hilbert’s program was a failure, the efforts along the way demonstrated that large swaths of mathematics could be constructed from logic. And after Shannon and Turing’s insights—showing the connections between electronics, logic and computing—it was now possible to export this new conceptual machinery over to computer design.

During World War II, this theoretical work was put into practice, when government labs conscripted a number of elite logicians. Von Neumann joined the atomic bomb project at Los Alamos, where he worked on computer design to support physics research. In 1945, he wrote the specification of the EDVAC—the first stored-program, logic-based computer—which is generally considered the definitive source guide for modern computer design.

Turing joined a secret unit at Bletchley Park, northwest of London, where he helped design computers that were instrumental in breaking German codes. His most enduring contribution to practical computer design was his specification of the ACE, or Automatic Computing Engine.

As the first computers to be based on Boolean logic and stored-program architectures, the ACE and the EDVAC were similar in many ways. But they also had interesting differences, some of which foreshadowed modern debates in computer design. Von Neumann’s favored designs were similar to modern CISC (“complex”) processors, baking rich functionality into hardware. Turing’s design was more like modern RISC (“reduced”) processors, minimizing hardware complexity and pushing more work to software.

Von Neumann thought computer programming would be a tedious, clerical job. Turing, by contrast, said computer programming “should be very fascinating. There need be no real danger of it ever becoming a drudge, for any processes that are quite mechanical may be turned over to the machine itself.”

Since the 1940s, computer programming has become significantly more sophisticated. One thing that hasn’t changed is that it still primarily consists of programmers specifying rules for computers to follow. In philosophical terms, we’d say that computer programming has followed in the tradition of deductive logic, the branch of logic discussed above, which deals with the manipulation of symbols according to formal rules.

In the past decade or so, programming has started to change with the growing popularity of machine learning, which involves creating frameworks for machines to learn via statistical inference. This has brought programming closer to the other main branch of logic, inductive logic, which deals with inferring rules from specific instances.

Today’s most promising machine learning techniques use neural networks, which were first invented in the 1940s by Warren McCulloch and Walter Pitts, whose idea was to develop a calculus for neurons that could, like Boolean logic, be used to construct computer circuits. Neural networks remained esoteric until decades later when they were combined with statistical techniques, which allowed them to improve as they were fed more data. Recently, as computers have become increasingly adept at handling large data sets, these techniques have produced remarkable results. Programming in the future will likely mean exposing neural networks to the world and letting them learn.
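To see how a calculus for neurons can stand in for Boolean logic, consider a single threshold unit in the spirit of McCulloch and Pitts. This is a rough sketch under stated assumptions: inputs are 0 or 1, and the weights and thresholds are hand-picked for illustration rather than taken from their paper.

```python
# Sketch of a McCulloch-Pitts style threshold unit.
# Assumption: inputs are 0/1, and the weights and thresholds below are
# hand-picked for illustration, not taken from the original paper.

def neuron(inputs, weights, threshold):
    """Fire (return 1) when the weighted input sum reaches the threshold."""
    total = sum(i * w for i, w in zip(inputs, weights))
    return 1 if total >= threshold else 0

def AND(a, b):
    return neuron([a, b], [1, 1], threshold=2)

def OR(a, b):
    return neuron([a, b], [1, 1], threshold=1)

def NOT(a):
    return neuron([a], [-1], threshold=0)

# Print the truth table: a, b, a AND b, a OR b
for a in (0, 1):
    for b in (0, 1):
        print(a, b, AND(a, b), OR(a, b))
```

Here the weights are fixed by hand. The later statistical techniques mentioned above replace that hand-tuning with weights adjusted from data.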

This would be a fitting second act to the story of computers. Logic began as a way to understand the laws of thought. It then helped create machines that could reason according to the rules of deductive logic. Today, deductive and inductive logic are being combined to create machines that both reason and learn. What began, in Boole’s words, with an investigation “concerning the nature and constitution of the human mind,” could result in the creation of new minds—artificial minds—that might someday match or even exceed our own.

Chris Dixon is a general partner at Andreessen Horowitz.

This article was originally published on March 20, 2017, by The Atlantic, and is republished here with permission.

I wrote Figuring (public library) to explore the interplay between chance and choice, the human search for meaning in an unfeeling universe governed by equal parts precision and randomness, the bittersweet beauty of asymmetrical and half-requited loves, and our restless impulse to uncover the deepest truths of nature, even at the price of our convenient existential delusions of self-importance. (More about the book here.) These are vast, thickly interwoven themes, difficult to distill in a single sentiment, so I chose two dramatically different yet complementary epigraphs to open the book — one drawn from the trailblazing 18th-century philosopher and woman of letters Germaine de Staël’s treatise on the happiness of individuals and societies, and the other from one of our civilization’s most lucid and luminous poets laureate of the human spirit: W.H. Auden (February 21, 1907–September 29, 1973).

The Auden stanza comes from his stunning poem “The More Loving One,” originally published in his 1960 book Homage to Clio (public library) — a collection of shorter poems about history, a concept Auden defines in his own epigraph for the book:

Between those happenings that prefigure it

And those that happen in its anamnesis

Occurs the Event, but that no human wit

Can recognize until all happening ceases.

History, in other words, is not the objective chronicle of events but the subjective recognition of happenings sighted in the rearview mirror of being. (This is a question I explore throughout Figuring, in the prelude to which I wrote that history is not what happened, but what survives the shipwrecks of judgment and chance.) Auden saw history — this selective set of remembrances constructed by human intention and choice — as both counterpart and antipode to nature, in which events unfold free of intent, governed by chance and the impartial physical laws of the universe. Curiously, “The More Loving One” appears among Auden’s poems about history, but it deals with nature and the disorienting necessity of learning to love a universe insentient to our hopes and fears, unconcerned with our individual fates — perhaps the least requited love there is, as well as the largest. It is an elegy, in the classic dual sense of lamentation and celebration, for our ambivalent relationship with this elemental truth and an homage to the supreme triumph of the human heart — the willingness to love that which does not and cannot love us back.

In this recording from the Academy of American Poets’ sixteenth annual Poetry & the Creative Mind, astrophysicist and author Janna Levin reads Auden’s sublime poem, with a lovely prefatory reflection on the bittersweet seductions and consolations of our unrequited love for the universe.

THE MORE LOVING ONE

by W.H. Auden

Looking up at the stars, I know quite well

That, for all they care, I can go to hell,

But on earth indifference is the least

We have to dread from man or beast.

How should we like it were stars to burn

With a passion for us we could not return?

If equal affection cannot be,

Let the more loving one be me.

Admirer as I think I am

Of stars that do not give a damn,

I cannot, now I see them, say

I missed one terribly all day.

Were all stars to disappear or die,

I should learn to look at an empty sky

And feel its total dark sublime,

Though this might take me a little time.

Complement with Levin’s beautiful readings of Maya Angelou’s cosmic clarion call to humanity, Adrienne Rich’s tribute to the world’s first professional female astronomer, and Ursula K. Le Guin’s ode to time, then revisit Auden on writing, true and false enchantment, and the political power of art. For a different side to the poetics of asymmetrical yet profoundly beautiful love, savor Emily Dickinson’s electric love letters to Susan Gilbert, excerpted from Figuring.

“Death is our friend precisely because it brings us into absolute and passionate presence with all that is here, that is natural, that is love,” Rilke wrote in contemplating the most difficult and rewarding existential art: befriending our own finitude. I have been sitting with Rilke, awash in the tidal waves of sorrow and love, in the wake of losing my beloved friend Emily Levine (October 23, 1944–February 3, 2019) — philosopher, comedian, universe-builder, beautiful soul — who made me fall in love with poetry long ago and without whom there would be no Universe in Verse and no Figuring. (Emily rightfully occupies the first line of the book’s acknowledgements.)

Ever since her terminal diagnosis in 2016, and up until just three weeks before her death, I have been taking Emily for what we came to call our “poetry retreats” — brief periodic respites by the ocean, where we would spend unhurried time in the company of a few other beloved women, reading poetry, cooking, conversing, and just being — with our joys, with our sorrows, with one another. Emily — the most erudite and intellectually voracious person I have ever known — introduced us to classics, many of which she knew by heart: Whitman, Eliot, Yeats, Plath, Rilke. But there was one contemporary poem she especially loved and read for us often: “You Can’t Have It All” by Barbara Ras, from her exquisite and exquisitely titled 1998 poetry collection Bite Every Sorrow (public library).

Now that Emily has returned her stardust to the universe she so cherished, and all the words seem too small to fill the void, poetry stands as the only mode of remembrance that can give shape and space to the amorphous largeness of feeling that is grief. In this sweetly lo-fi recording from one of our gatherings, punctuated by the sound of the ocean and the rustle of page-turning, Emily reads the poem that she, in the deepest sense, lived out and modeled for the rest of us with her largehearted life.

YOU CAN’T HAVE IT ALL

But you can have the fig tree and its fat leaves like clown hands

gloved with green. You can have the touch of a single eleven-year-old finger

on your cheek, waking you at one a.m. to say the hamster is back.

You can have the purr of the cat and the soulful look

of the black dog, the look that says, If I could I would bite

every sorrow until it fled, and when it is August,

you can have it August and abundantly so. You can have love,

though often it will be mysterious, like the white foam

that bubbles up at the top of the bean pot over the red kidneys

until you realize foam’s twin is blood.

You can have the skin at the center between a man’s legs,

so solid, so doll-like. You can have the life of the mind,

glowing occasionally in priestly vestments, never admitting pettiness,

never stooping to bribe the sullen guard who’ll tell you

all roads narrow at the border.

You can speak a foreign language, sometimes,

and it can mean something. You can visit the marker on the grave

where your father wept openly. You can’t bring back the dead,

but you can have the words forgive and forget hold hands

as if they meant to spend a lifetime together. And you can be grateful

for makeup, the way it kisses your face, half spice, half amnesia, grateful

for Mozart, his many notes racing one another towards joy, for towels

sucking up the drops on your clean skin, and for deeper thirsts,

for passion fruit, for saliva. You can have the dream,

the dream of Egypt, the horses of Egypt and you riding in the hot sand.

You can have your grandfather sitting on the side of your bed,

at least for a while, you can have clouds and letters, the leaping

of distances, and Indian food with yellow sauce like sunrise.

You can’t count on grace to pick you out of a crowd

but here is your friend to teach you how to high jump,

how to throw yourself over the bar, backwards,

until you learn about love, about sweet surrender,

and here are periwinkles, buses that kneel, farms in the mind

as real as Africa. And when adulthood fails you,

you can still summon the memory of the black swan on the pond

of your childhood, the rye bread with peanut butter and bananas

your grandmother gave you while the rest of the family slept.

There is the voice you can still summon at will, like your mother’s,

it will always whisper, you can’t have it all,

but there is this.

Complement with Emily’s splendid reading of “On the Fifth Day” by Jane Hirshfield, who often graced our poetry retreats with her Buddhist benediction of a presence, then revisit Mary Oliver — one of Emily’s favorite poets, whom she outlived by seventeen days — on the measure of a life well lived and how to live with maximal aliveness.

This essay is adapted from Figuring.

This is how I picture it:

A spindly middle-aged mathematician with a soaring mind, a sunken heart, and bad skin is being thrown about the back of a carriage in the bone-hollowing cold of a German January. Since his youth, he has been inscribing into family books and friendship albums his personal motto, borrowed from a verse by the ancient poet Persius: “O the cares of man, how much of everything is futile.” He has weathered personal tragedies that would level most. He is now racing through the icy alabaster expanse of the countryside in the precarious hope of averting another: Four days after Christmas and two days after his forty-fourth birthday, a letter from his sister has informed him that their widowed mother is on trial for witchcraft — a fact for which he holds himself responsible.

He has written the world’s first work of science fiction — a clever allegory advancing the controversial Copernican model of the universe, describing the effects of gravity decades before Newton formalized it into a law, envisioning speech synthesis centuries before computers, and presaging space travel three hundred years before the Moon landing. The story, intended to counter superstition with science through symbol and metaphor inviting critical thinking, has instead effected the deadly indictment of his elderly, illiterate mother.

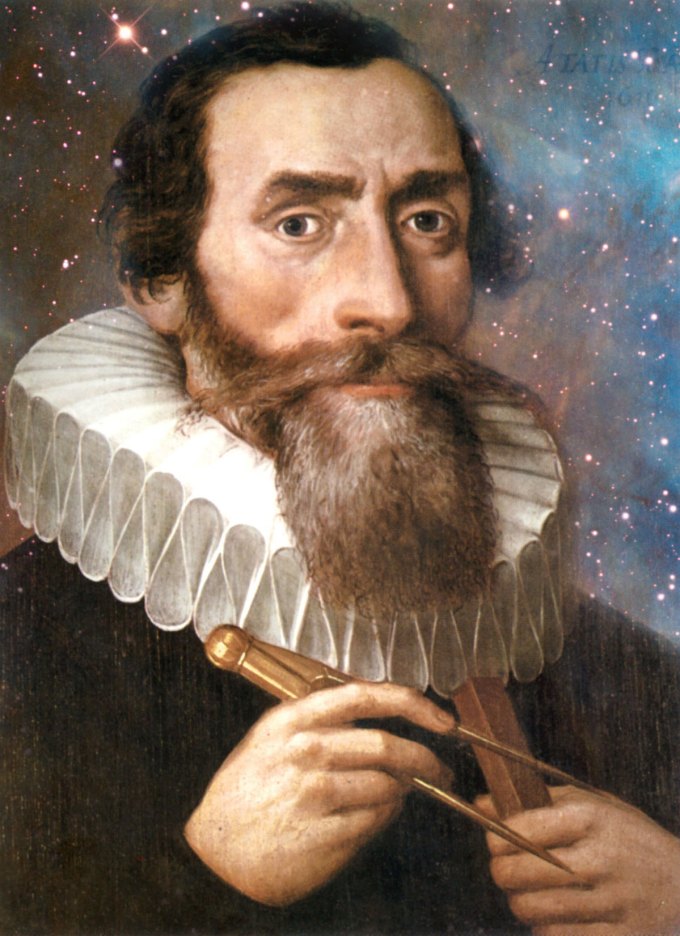

The year is 1617. His name is Johannes Kepler (December 27, 1571–November 15, 1630) — perhaps the unluckiest man in the world, perhaps the greatest scientist who ever lived.

Johannes Kepler

He inhabits a world in which God is mightier than nature, the Devil realer and more omnipresent than gravity. All around him, people believe that the sun revolves around the Earth every twenty-four hours, set into perfect circular motion by an omnipotent creator; the few who dare support the tendentious idea that the Earth rotates around its axis while revolving around the sun believe that it moves along a perfectly circular orbit. Kepler would disprove both beliefs, coin the word orbit, and quarry the marble out of which classical physics would be sculpted. He would be the first astronomer to develop a scientific method of predicting eclipses and the first to link mathematical astronomy to material reality — the first astrophysicist — by demonstrating that physical forces move the heavenly bodies in calculable ellipses. All of this he would accomplish while drawing horoscopes, espousing the spontaneous creation of new animal species rising from bogs and oozing from tree bark, and believing the Earth itself to be an ensouled body that has digestion, that suffers illness, that inhales and exhales like a living organism. Three centuries later, the marine biologist and writer Rachel Carson would reimagine a version of this view woven of science and stripped of mysticism as she makes ecology a household word.

Kepler’s life is a testament to how science does for reality what Plutarch’s thought experiment known as “the Ship of Theseus” does for the self. In the ancient Greek allegory, Theseus — the founder-king of Athens — sailed triumphantly back to the great city after slaying the mythic Minotaur on Crete. For a thousand years, his ship was maintained in the harbor of Athens as a living trophy and was sailed to Crete annually to reenact the victorious voyage. As time began to corrode the vessel, its components were replaced one by one — new planks, new oars, new sails — until no original part remained. Was it then, Plutarch asks, the same ship? There is no static, solid self. Throughout life, our habits, beliefs, and ideas evolve beyond recognition. Our physical and social environments change. Almost all of our cells are replaced. Yet we remain, to ourselves, “who” “we” “are.”

So with science: Bit by bit, discoveries reconfigure our understanding of reality. This reality is revealed to us only in fragments. The more fragments we perceive and parse, the more lifelike the mosaic we make of them. But it is still a mosaic, a representation — imperfect and incomplete, however beautiful it may be, and subject to unending transfiguration. Three centuries after Kepler, Lord Kelvin would take the podium at the British Association of Science in the year 1900 and declare: “There is nothing new to be discovered in physics now. All that remains is more and more precise measurement.” At the same moment in Zurich, the young Albert Einstein is incubating the ideas that would converge into his revolutionary conception of spacetime, irreversibly transfiguring our elemental understanding of reality.

Even the farthest seers can’t bend their gaze beyond their era’s horizon of possibility, but the horizon shifts with each incremental revolution as the human mind peers outward to take in nature, then turns inward to question its own givens. We sieve the world through the mesh of these certitudes, tautened by nature and culture, but every once in a while — whether by accident or conscious effort — the wire loosens and the kernel of a revolution slips through.

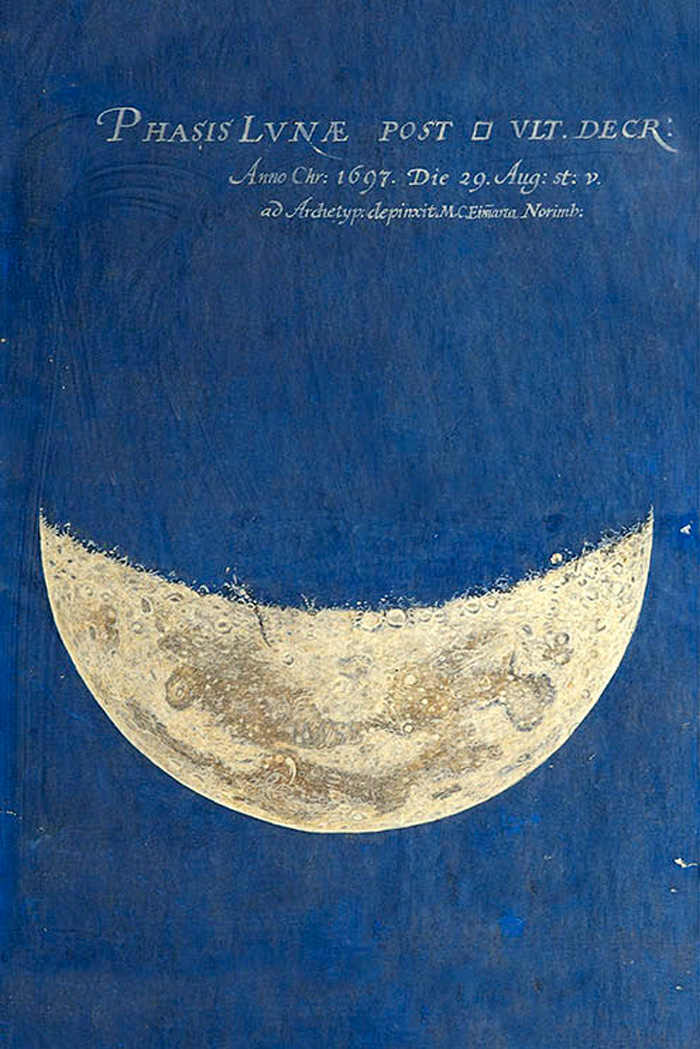

Painting of the Moon by the 17th-century German self-taught astronomer and artist Maria Clara Eimmart. (Available as a print.)

Kepler first came under the thrall of the heliocentric model as a student at the Lutheran University of Tübingen half a century after Copernicus published his theory. The twenty-two-year-old Kepler, studying to enter the clergy, wrote a dissertation about the Moon, aimed at demonstrating the Copernican claim that the Earth is moving simultaneously around its axis and around the sun. A classmate by the name of Christoph Besold — a law student at the university — was so taken with Kepler’s lunar paper that he proposed a public debate. The university promptly vetoed it. A couple of years later, Galileo would write to Kepler that he’d been a believer in the Copernican system himself “for many years” — and yet he hadn’t dared to stand up for it in public and wouldn’t for more than thirty years.

Kepler’s radical ideas rendered him too untrustworthy for the pulpit. After graduation, he was banished across the country to teach mathematics at a Lutheran seminary in Graz. But he was glad — he saw himself, mind and body, as cut out for scholarship. “I take from my mother my bodily constitution,” he would later write, “which is more suited to study than to other kinds of life.” Three centuries later, Walt Whitman would observe how beholden the mind is to the body, “how behind the tally of genius and morals stands the stomach, and gives a sort of casting vote.”

While Kepler saw his body as an instrument of scholarship, other bodies around him were being exploited as instruments of superstition. In Graz, he witnessed dramatic exorcisms performed on young women believed to be possessed by demons — grim public spectacles staged by the king and his clergy. He saw brightly colored fumes emanate from one woman’s belly and glistening black beetles crawl out of another’s mouth. He saw the deftness with which the puppeteers of the populace dramatized dogma to wrest control — the church was then the mass media, and the mass media were as unafraid of resorting to propaganda as they are today.

As religious persecution escalated — soon it would erupt into the Thirty Years’ War, the deadliest religious war in the Continent’s history — life in Graz became unlivable. Protestants were forced to marry by Catholic ritual and have their children baptized as Catholics. Homes were raided, heretical books confiscated and destroyed. When Kepler’s infant daughter died, he was fined for evading the Catholic clergy and not allowed to bury his child until he paid the charge. It was time to migrate — a costly and trying endeavor for the family, but Kepler knew there would be a higher price to pay for staying:

I may not regard loss of property more seriously than loss of opportunity to fulfill that for which nature and career have destined me.

Returning to Tübingen for a career in the clergy was out of the question:

I could never torture myself with greater unrest and anxiety than if I now, in my present state of conscience, should be enclosed in that sphere of activity.

Instead, Kepler reconsidered something he had initially viewed merely as a flattering compliment to his growing scientific reputation: an invitation to visit the prominent Danish astronomer Tycho Brahe in Bohemia, where he had just been appointed royal mathematician to the Holy Roman Emperor.

Tycho Brahe

Kepler made the arduous five-hundred-kilometer journey to Prague. On February 4, 1600, the famous Dane welcomed him warmly into the castle where he computed the heavens, his enormous orange mustache almost aglow with geniality. During the two months Kepler spent there as guest and apprentice, Tycho was so impressed with the young astronomer’s theoretical ingenuity that he permitted him to analyze the celestial observations he had been guarding closely from all other scholars, then offered him a permanent position. Kepler accepted gratefully and journeyed back to Graz to collect his family, arriving in a retrograde world even more riven by religious persecution. When the Keplers refused to convert to Catholicism, they were banished from the city — the migration to Prague, with all the privations it would require, was no longer optional. Shortly after Kepler and his family alighted in their new life in Bohemia, the valve between chance and choice opened again, and another sudden change of circumstance flooded in: Tycho died unexpectedly at the age of fifty-four. Two days later, Kepler was appointed his successor as imperial mathematician, inheriting Tycho’s data. Over the coming years, he would draw on it extensively in devising his three laws of planetary motion, which would revolutionize the human understanding of the universe.

How many revolutions does the cog of culture make before a new truth about reality catches into gear?

Three centuries before Kepler, Dante had marveled in his Divine Comedy at the new clocks ticking in England and Italy: “One wheel moves and drives the other.” This marriage of technology and poetry eventually gave rise to the metaphor of the clockwork universe. Before Newton’s physics placed this metaphor at the ideological epicenter of the Enlightenment, Kepler bridged the poetic and the scientific. In his first book, The Cosmographic Mystery, Kepler picked up the metaphor and stripped it of its divine dimensions, removing God as the clockmaster and instead pointing to a single force operating the heavens: “The celestial machine,” he wrote, “is not something like a divine organism, but rather something like a clockwork in which a single weight drives all the gears.” Within it, “the totality of the complex motions is guided by a single magnetic force.” It was not, as Dante wrote, “love that moves the sun and other stars” — it was gravity, as Newton would later formalize this “single magnetic force.” But it was Kepler who thus formulated for the first time the very notion of a force — something that didn’t exist for Copernicus, who, despite his groundbreaking insight that the sun moves the planets, still conceived of that motion in poetic rather than scientific terms. For him, the planets were horses whose reins the sun held; for Kepler, they were gears the sun wound by a physical force.

In the anxious winter of 1617, unfigurative wheels are turning beneath Johannes Kepler as he hastens to his mother’s witchcraft trial. For this long journey by horse and carriage, Kepler has packed a battered copy of Dialogue on Ancient and Modern Music by Vincenzo Galilei, his sometime friend Galileo’s father — one of the era’s most influential treatises on music, a subject that always enchanted Kepler as much as mathematics, perhaps because he never saw the two as separate. Three years later, he would draw on it in composing his own groundbreaking book The Harmony of the World, in which he would formulate his third and final law of planetary motion, known as the harmonic law — his exquisite discovery, twenty-two years in the making, of the proportional link between a planet’s orbital period and the length of the axis of its orbit. It would help compute, for the first time, the distance of the planets from the sun — the measure of the heavens in an era when the Solar System was thought to be all there was.

As Kepler is galloping through the German countryside to prevent his mother’s execution, the Inquisition in Rome is about to declare the claim of Earth’s motion heretical — a heresy punishable by death.

Behind him lies a crumbled life: Emperor Rudolph II is dead — Kepler is no longer royal mathematician and chief scientific adviser to the Holy Roman Emperor, a job endowed with Europe’s highest scientific prestige, though primarily tasked with casting horoscopes for royalty; his beloved six-year-old son is dead — “a hyacinth of the morning in the first day of spring” wilted by smallpox, a disease that had barely spared Kepler himself as a child, leaving his skin cratered by scars and his eyesight permanently damaged; his first wife is dead, having come unhinged by grief before succumbing to the pox herself.

Before him lies the collision of two worlds in two world systems, the spark of which would ignite the interstellar imagination.

SOMNIUM?

In 1609, Johannes Kepler finished the first work of genuine science fiction — that is, imaginative storytelling in which sensical science is a major plot device. Somnium, or The Dream, is the fictional account of a young astronomer who voyages to the Moon. Rich in both scientific ingenuity and symbolic play, it is at once a masterwork of the literary imagination and an invaluable scientific document, all the more impressive for the fact that it was written before Galileo pointed the first spyglass at the sky and before Kepler himself had ever looked through a telescope.

Kepler knew what we habitually forget — that the locus of possibility expands when the unimaginable is imagined and then made real through systematic effort. Centuries later, in a 1971 conversation with Carl Sagan and Arthur C. Clarke about the future of space exploration, science fiction patron saint Ray Bradbury would capture this transmutation process perfectly: “It’s part of the nature of man to start with romance and build to a reality.” Like any currency of value, the human imagination is a coin with two inseparable sides. It is our faculty of fancy that fills the disquieting gaps of the unknown with the tranquilizing certitudes of myth and superstition, that points to magic and witchcraft when common sense and reason fail to unveil causality. But that selfsame faculty is also what leads us to rise above accepted facts, above the limits of the possible established by custom and convention, and reach for new summits of previously unimagined truth. Which way the coin flips depends on the degree of courage, determined by some incalculable combination of nature, culture, and character.

In a letter to Galileo containing the first written mention of The Dream’s existence and penned in the spring of 1610 — a little more than a century after Columbus voyaged to the Americas — Kepler ushers his correspondent’s imagination toward fathoming the impending reality of interstellar travel by reminding him just how unimaginable transatlantic travel had seemed not so long ago:

Who would have believed that a huge ocean could be crossed more peacefully and safely than the narrow expanse of the Adriatic, the Baltic Sea or the English Channel?

Kepler envisions that once “sails or ships fit to survive the heavenly breezes” are invented, voyagers would no longer fear the dark emptiness of interstellar space. With an eye to these future explorers, he issues a solidary challenge:

So, for those who will come shortly to attempt this journey, let us establish the astronomy: Galileo, you of Jupiter, I of the moon.


Newton would later refine Kepler’s three laws of motion with his formidable calculus and richer understanding of the underlying force as the foundation of Newtonian gravity. In a quarter millennium, the mathematician Katherine Johnson would draw on these laws in computing the trajectory that lands Apollo 11 on the Moon. They would guide the Voyager spacecraft, the first human-made object to sail into interstellar space.

In The Dream, which Kepler described in his letter to Galileo as a “lunar geography,” the young traveler lands on the Moon to find that lunar beings believe Earth revolves around them — from their cosmic vantage point, our pale blue dot rises and sets against their firmament, something reflected even in the name they have given Earth: Volva. Kepler chose the name deliberately, to emphasize the fact of Earth’s revolution — the very motion that made Copernicanism so dangerous to the dogma of cosmic stability. Assuming that the reader is aware that the Moon revolves around the Earth — an anciently observed fact, thoroughly uncontroversial by his day — Kepler intimates the unnerving central question: Could it be, his story suggests in a stroke of allegorical genius predating Edwin Abbott Abbott’s Flatland by nearly three centuries, that our own certitude about Earth’s fixed position in space is just as misguided as the lunar denizens’ belief in Volva’s revolution around them? Could we, too, be revolving around the sun, even though the ground feels firm and motionless beneath our feet?

The Dream was intended to gently awaken people to the truth of Copernicus’s disconcerting heliocentric model of the universe, defying the long-held belief that Earth is the static center of an immutable cosmos. But earthlings’ millennia-long slumber was too deep for The Dream — a deadly somnolence, for it resulted in Kepler’s elderly mother’s being accused of witchcraft. Tens of thousands of people would be tried for witchcraft by the end of the persecution in Europe, dwarfing the two dozen who would render Salem synonymous with witchcraft trials seven decades later. Most of the accused were women, whose inculpation or defense fell on their sons, brothers, and husbands. Most of the trials ended in execution. In Germany, some twenty-five thousand were killed. In Kepler’s sparsely populated hometown alone, six women had been burned as witches just a few weeks before his mother was indicted.

An uncanny symmetry haunts Kepler’s predicament — it was Katharina Kepler who had first enchanted her son with astronomy when she took him to the top of a nearby hill and let the six-year-old boy gape in wonderment as the Great Comet of 1577 blazed across the sky.

Art from The Comet Book, 1587. (Available as a print.)

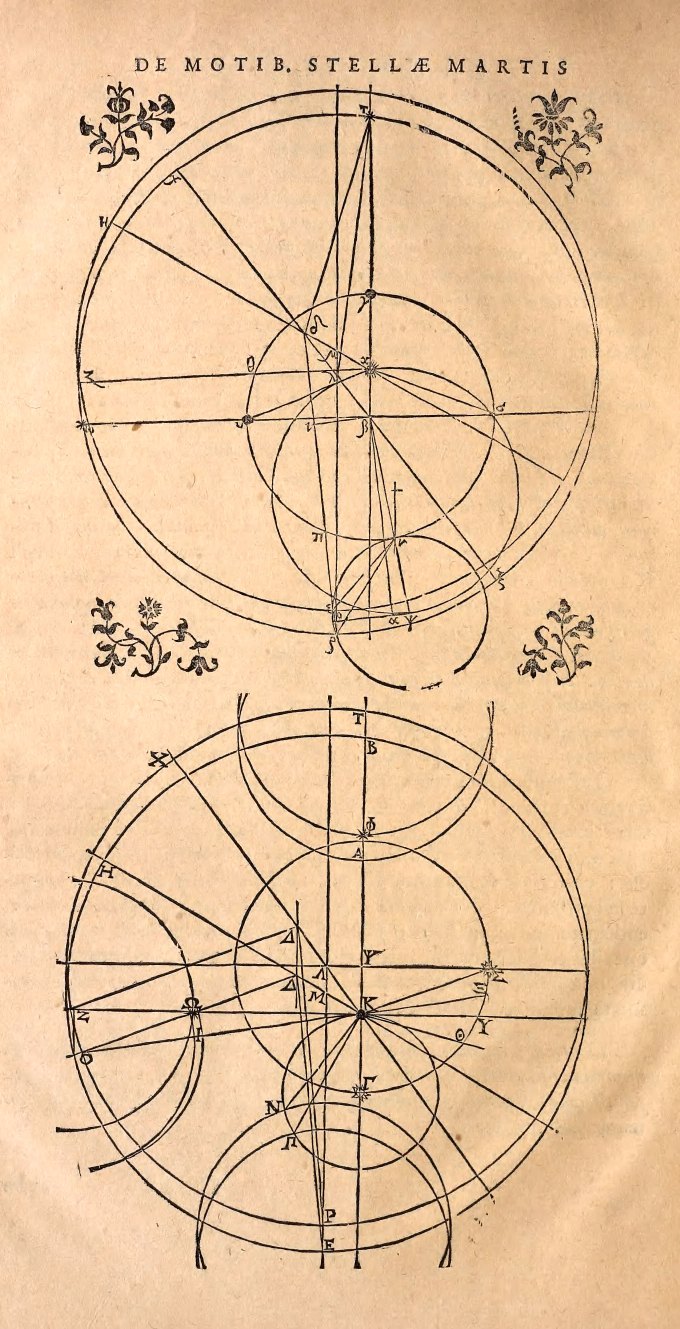

By the time he wrote The Dream, Kepler was one of the most prominent scientists in the world. His rigorous fidelity to observational data harmonized with a symphonic imagination. Drawing on Tycho’s data, Kepler devoted a decade and more than seventy failed trials to calculating the orbit of Mars, which became the yardstick for measuring the heavens. Having just formulated the first of his laws, demolishing the ancient belief that the heavenly bodies obey uniform circular motion, Kepler demonstrated that the planets orbit the sun at varying speeds along ellipses. Unlike previous models, which were simply mathematical hypotheses, Kepler discovered the actual orbit by which Mars moved through space, then used the Mars data to determine Earth’s orbit. Taking multiple observations of Mars’s position relative to Earth, he examined how the angle between the two planets changed over the course of the orbital period he had already calculated for Mars: 687 days. To do this, Kepler had to project himself onto Mars with an empathic leap of the imagination. The word empathy would come into popular use three centuries later, through the gateway of art, when it entered the modern lexicon in the early twentieth century to describe the imaginative act of projecting oneself into a painting in an effort to understand why art moves us. Through science, Kepler had projected himself into the greatest work of art there is in an effort to understand how nature draws its laws to move the planets, including the body that moves us through space. Using trigonometry, he calculated the distance between Earth and Mars, located the center of Earth’s orbit, and went on to demonstrate that all the other planets also moved along elliptical orbits, thus demolishing the foundation of Greek astronomy — uniform circular motion — and effecting a major strike against the Ptolemaic model.

The orbital motion of Mars, from Kepler’s Astronomia nova. (Available as a print.)

Kepler published these revelatory results, which summed up his first two laws, in his book Astronomia nova — The New Astronomy. That is exactly what it was — the nature of the cosmos had forever changed, and so had our place in it. “Through my effort God is being celebrated in astronomy,” Kepler wrote to his former professor, reflecting on having traded a career in theology for the conquest of a greater truth.

By the time of Astronomia nova, Kepler had ample mathematical evidence affirming Copernicus’s theory. But he realized something crucial and abiding about human psychology: The scientific proof was too complex, too cumbersome, too abstract to persuade even his peers, much less the scientifically illiterate public; it wasn’t data that would dismantle their celestial parochialism, but storytelling. Three centuries before the poet Muriel Rukeyser wrote that “the universe is made of stories, not of atoms,” Kepler knew that whatever the composition of the universe may be, its understanding was indeed the work of stories, not of science — that what he needed was a new rhetoric by which to illustrate, in a simple yet compelling way, that the Earth is indeed in motion. And so The Dream was born.

Even in medieval times, the Frankfurt Book Fair was one of the world’s most fecund literary marketplaces. Kepler attended it frequently in order to promote his own books and to stay informed about other important scientific publications. He brought the manuscript of The Dream with him to this safest possible launchpad, where the other attendees, in addition to being well aware of the author’s reputation as a royal mathematician and astronomer, were either scientists themselves or erudite enough to appreciate the story’s clever allegorical play on science. But something went awry: Sometime in 1611, the sole manuscript fell into the hands of a wealthy young nobleman and made its way across Europe. By Kepler’s account, it even reached John Donne and inspired his ferocious satire of the Catholic Church, Ignatius His Conclave. Circulated via barbershop gossip, versions of the story had reached minds far less literary, or even literate, by 1615. These garbled retellings eventually made their way to Kepler’s home duchy.

“Once a poem is made available to the public, the right of interpretation belongs to the reader,” young Sylvia Plath would write to her mother three centuries later. But interpretation invariably reveals more about the interpreter than about the interpreted. The gap between intention and interpretation is always rife with wrongs, especially when writer and reader occupy vastly different strata of emotional maturity and intellectual sophistication. The science, symbolism, and allegorical virtuosity of The Dream were entirely lost on the illiterate, superstitious, and vengeful villagers of Kepler’s hometown. Instead, they interpreted the story with the only tool at their disposal — the blunt weapon of the literal shorn of context. They were especially captivated by one element of the story: The narrator is a young astronomer who describes himself as “by nature eager for knowledge” and who had apprenticed with Tycho Brahe. By then, people far and wide knew of Tycho’s most famous pupil and imperial successor. Perhaps it was a point of pride for locals to have produced the famous Johannes Kepler, perhaps a point of envy. Whatever the case, they immediately took the story to be not fiction but autobiography. This was the seedbed of trouble: Another main character was the narrator’s mother — an herb doctor who conjures up spirits to assist her son in his lunar voyage. Kepler’s own mother was an herb doctor.

Whether what happened next was the product of intentional malevolent manipulation or the unfortunate workings of ignorance is hard to tell. My own sense is that one aided the other, as those who stand to gain from the manipulation of truth often prey on those bereft of critical thinking. According to Kepler’s subsequent account, a local barber overheard the story and seized upon the chance to cast Katharina Kepler as a witch — an opportune accusation, for the barber’s sister Ursula had a bone to pick with the elderly woman, a disavowed friend. Ursula Reinhold had borrowed money from Katharina Kepler and never repaid it. She had also confided in the old widow about having become pregnant by a man other than her husband. In an act of unthinking indiscretion, Katharina had shared this compromising information with Johannes’s younger brother, who had then just as unthinkingly circulated it around the small town. To abate scandal, Ursula had obtained an abortion. To cover up the brutal corporeal aftermath of this medically primitive procedure, she blamed her infirmity on a spell — cast against her, she proclaimed, by Katharina Kepler. Soon Ursula persuaded twenty-four suggestible locals to give accounts of the elderly woman’s sorcery — one neighbor claimed that her daughter’s arm had grown numb after Katharina brushed against it in the street; the butcher’s wife swore that pain pierced her husband’s thigh when Katharina walked by; the limping schoolmaster dated the onset of his disability to a night ten years earlier when he had taken a sip from a tin cup at Katharina’s house while reading her one of Kepler’s letters. She was accused of appearing magically through closed doors, of having caused the deaths of infants and animals. The Dream, Kepler believed, had furnished the superstition-hungry townspeople with evidence of his mother’s alleged witchcraft — after all, her own son had depicted her as a sorcerer in his story, the allegorical nature of which eluded them completely.

For her part, Katharina Kepler didn’t help her own case. Prickly in character and known to brawl, she first tried suing Ursula for slander — a strikingly modern American approach but, in medieval Germany, effective only in stoking the fire, for Ursula’s well-connected family had ties to local authorities. Then she tried bribing the magistrate into dismissing her case by offering him a silver chalice, which was promptly interpreted as an admission of guilt, and the civil case was escalated to a criminal trial for witchcraft.

In the midst of this tumult, Kepler’s infant daughter, named for his mother, died of epilepsy, followed by another son, four years old, of smallpox.

Having taken his mother’s defense upon himself as soon as he first learned of the accusation, the bereaved Kepler devoted six years to the trial, all the while trying to continue his scientific work and to see through the publication of the major astronomical catalog he had been composing since he inherited Tycho’s data. Working remotely from Linz, Kepler first wrote various petitions on Katharina’s behalf, then mounted a meticulous legal defense in writing. He requested trial documentation of witness testimonies and transcripts of his mother’s interrogations. He then journeyed across the country once more, sitting with Katharina in prison and talking with her for hours on end to assemble information about the people and events of the small town he had left long ago. Despite the allegation that she was demented, the seventy-something Katharina’s memory was astonishing — she recalled in granular detail incidents that had taken place years earlier.

Kepler set out to disprove each of the forty-nine “points of disgrace” hurled against his mother, using the scientific method to uncover the natural causes behind the supernatural evils she had allegedly wrought on the townspeople. He confirmed that Ursula had had an abortion, that the teenaged girl had numbed her arm by carrying too many bricks, that the schoolmaster had lamed his leg by tripping into a ditch, that the butcher suffered from lumbago.

None of Kepler’s epistolary efforts at reason worked. Five years into the ordeal, an order for Katharina’s arrest was served. In the small hours of an August night, armed guards barged into her daughter’s house and found Katharina, who had heard the disturbance, hiding in a wooden linen chest — naked, as she often slept during the hot spells of summer. By one account, she was permitted to clothe herself before being taken away; by another, she was carried out disrobed inside the trunk to avoid a public disturbance and hauled to prison for another interrogation. So gratuitous was the fabrication of evidence that even Katharina’s composure through the indignities was held against her — the fact that she didn’t cry during the proceedings was cited as proof of unrepentant liaison with the Devil. Kepler had to explain to the court that he had never seen his stoic mother shed a single tear — not when his father left in Johannes’s childhood, not during the long years Katharina spent raising her children alone, not in the many losses of old age.

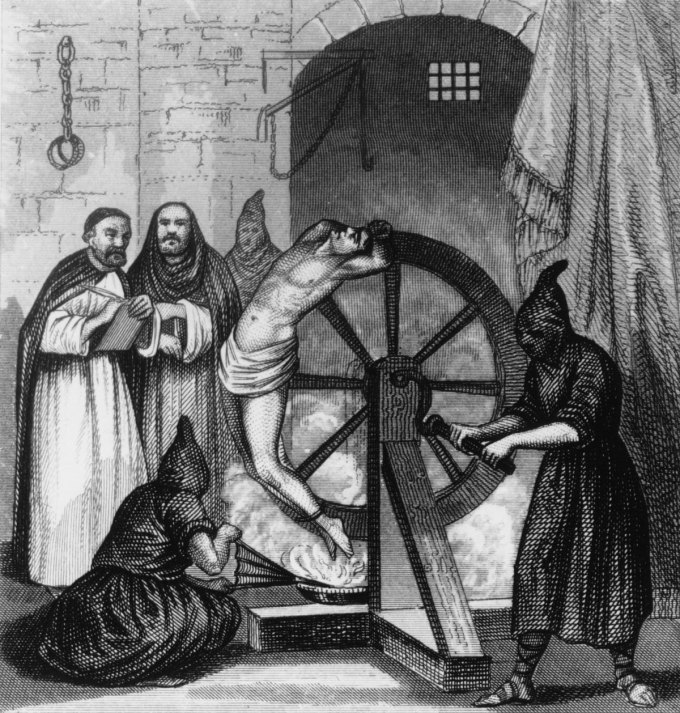

Katharina was threatened with being stretched on a wheel — a diabolical device commonly used to extract confessions — unless she admitted to sorcery. This elderly woman, who had outlived her era’s life expectancy by decades, would spend the next fourteen months imprisoned in a dark room, sitting and sleeping on the stone floor to which she was shackled with a heavy iron chain. She faced the threats with self-possession and confessed nothing.

The breaking wheel

In a last recourse, Kepler uprooted his entire family, left his teaching position, and traveled again to his hometown as the Thirty Years’ War raged on. I wonder if he wondered during that dispiriting journey why he had written The Dream in the first place, wondered whether the price of any truth is to be capped at so great a personal cost.

Long ago, as a student at Tübingen, Kepler had read Plutarch’s The Face on the Moon — the mythical story of a traveler who sails to a group of islands north of Britain inhabited by people who know secret passages to the Moon. There is no science in Plutarch’s story — it is pure fantasy. And yet it employs the same simple, clever device that Kepler himself would use in The Dream fifteen centuries later to unsettle the reader’s anthropocentric bias: In considering the Moon as a potential habitat for life, Plutarch pointed out that the idea of life in saltwater seems unfathomable to air-breathing creatures such as ourselves, and yet life in the oceans exists. It would be another eighteen centuries before we would fully awaken not only to the fact of marine life but to the complexity and splendor of this barely fathomable reality when Rachel Carson pioneered a new aesthetic of poetic science writing, inviting the human reader to consider Earth from the nonhuman perspective of sea creatures.

Kepler first read Plutarch’s story in 1595, but it wasn’t until the solar eclipse of 1605, the observations of which first gave him the insight that the orbits of the planets were ellipses rather than circles, that he began seriously considering the allegory as a means of illustrating Copernican ideas. Where Plutarch had explored space travel as metaphysics, Kepler made it a sandbox for real physics, exploring gravity and planetary motion. In writing about the takeoff of his imaginary spaceship, for instance, he makes clear that he has a theoretical model of gravity factoring in the demands that breaking away from Earth’s gravitational grip would place on cosmic voyagers. He goes on to add that while leaving Earth’s gravitational pull would be toilsome, once the spaceship is in the gravity-free “aether,” hardly any force would be needed to keep it in motion — an early understanding of inertia in the modern sense, predating by decades Newton’s first law of motion, which states that a body will move at a steady velocity unless acted upon by an outside force.

In a passage at once insightful and amusing, Kepler describes the physical requirements for his lunar travelers — a prescient description of astronaut training:

No inactive persons are accepted…no fat ones; no pleasure-loving ones; we choose only those who have spent their lives on horseback, or have shipped often to the Indies and are accustomed to subsisting on hardtack, garlic, dried fish and unpalatable fare.

Three centuries later, the early polar explorer Ernest Shackleton would post a similar recruitment ad for his pioneering Antarctic expedition:

Men wanted for hazardous journey, small wages, bitter cold, long months of complete darkness, constant danger, safe return doubtful, honor and recognition in case of success.

When a woman named Peggy Peregrine expressed interest on behalf of an eager female trio, Shackleton dryly replied: “There are no vacancies for the opposite sex on the expedition.” Half a century later, the Russian cosmonaut Valentina Tereshkova would become the first woman to exit Earth’s atmosphere on a spacecraft guided by Kepler’s laws.

After years of exerting reason against superstition, Kepler ultimately succeeded in getting his mother acquitted. But the seventy-five-year-old woman never recovered from the trauma of the trial and the bitter German winter spent in the unheated prison. On April 13, 1622, shortly after she was released, Katharina Kepler died, adding to her son’s litany of losses. A quarter millennium later, Emily Dickinson would write in a poem the central metaphor of which draws on Kepler’s legacy:

Each that we lose takes part of us;

A crescent still abides,

Which like the moon, some turbid night,

Is summoned by the tides.

Partial eclipse of the Moon — one of French artist Étienne Léopold Trouvelot’s astronomical drawings.

A few months after his mother’s death, Kepler received a letter from Christoph Besold — the classmate who had stuck up for his lunar dissertation thirty years earlier, now a successful attorney and professor of law. Having witnessed Katharina’s harrowing fate, Besold had worked to expose the ignorance and abuses of power that sealed it, procuring a decree from the duke of Kepler’s home duchy prohibiting any other witchcraft trials unsanctioned by the Supreme Court in the urban and presumably far less superstitious Stuttgart. “While neither your name nor that of your mother is mentioned in the edict,” Besold wrote to his old friend, “everyone knows that it is at the bottom of it. You have rendered an inestimable service to the whole world, and someday your name will be blessed for it.”

Kepler was unconsoled by the decree — perhaps he knew that policy change and cultural change are hardly the same thing, existing on different time scales. He spent the remaining years of his life obsessively annotating The Dream with two hundred twenty-three footnotes — a volume of hypertext equal to the story itself — intended to dispel superstitious interpretations by delineating his exact scientific reasons for using the symbols and metaphors he did.

In his ninety-sixth footnote, Kepler plainly stated “the hypothesis of the whole dream”: “an argument for the motion of the Earth, or rather a refutation of arguments constructed, on the basis of perception, against the motion of the Earth.” Fifty footnotes later, he reiterated the point by asserting that he envisioned the allegory as “a pleasant retort” to Ptolemaic parochialism. In a trailblazing systematic effort to unmoor scientific truth from the illusions of commonsense perception, he wrote:

Everyone says it is plain that the stars go around the earth while the Earth remains still. I say that it is plain to the eyes of the lunar people that our Earth, which is their Volva, goes around while their moon is still. If it be said that the lunatic perceptions of my moon-dwellers are deceived, I retort with equal justice that the terrestrial senses of the Earth-dwellers are devoid of reason.

Copernicus’s heliocentric universe, 1543.

In another footnote, Kepler defined gravity as “a power similar to magnetic power — a mutual attraction,” and described its chief law:

The attractive power is greater in the case of two bodies that are near to each other than it is in the case of bodies that are far apart. Therefore, bodies more strongly resist separation one from the other when they are still close together.

A further footnote pointed out that gravity is a universal force affecting bodies beyond the Earth, and that lunar gravity is responsible for earthly tides: “The clearest evidence of the relationship between earth and the moon is the ebb and flow of the seas.” This fact, which became central to Newton’s laws and which is now so commonplace that schoolchildren point to it as plain evidence of gravity, was far from accepted in Kepler’s scientific community. Galileo, who was right about so much, was also wrong about so much — something worth remembering as we train ourselves in the cultural acrobatics of nuanced appreciation without idolatry. Galileo believed, for instance, that comets were vapors of the earth — a notion Tycho Brahe disproved by demonstrating that comets are celestial objects moving through space along computable trajectories after observing the very comet that had made six-year-old Kepler fall in love with astronomy. Galileo didn’t merely deny that tides were caused by the Moon — he went as far as to mock Kepler’s assertion that they are. “That concept is completely repugnant to my mind,” he wrote — not even in a private letter but in his landmark Dialogue on the Two Chief World Systems — scoffing that “though [Kepler] has at his fingertips the motions attributed to the Earth, he has nevertheless lent his ear and his assent to the Moon’s dominion over the waters, to occult properties, and to such puerilities.”

Kepler took particular care with the portion of the allegory he saw as most directly responsible for his mother’s witchcraft trial — the appearance of nine spirits, summoned by the protagonist’s mother. In a footnote, he explained that these symbolize the nine Greek muses. In one of the story’s more cryptic sentences, Kepler wrote of these spirits: “One, particularly friendly to me, most gentle and purest of all, is called forth by twenty-one characters.” In his subsequent defense in footnotes, he explained that the phrase “twenty-one characters” refers to the number of letters used to spell Astronomia Copernicana. The friendliest spirit represents Urania — the ancient Greek muse of astronomy, which Kepler considered the most reliable of the sciences:

Although all the sciences are gentle and harmless in themselves (and on that account they are not those wicked and good-for-nothing spirits with whom witches and fortune-tellers have dealings…), this is especially true of astronomy because of the very nature of its subject matter.

Urania, the ancient Greek muse of astronomy, as depicted in an 1885 Italian book of popular astronomy.

When the astronomer William Herschel discovered the seventh planet from the sun a century and a half later, he named it Uranus, after the same muse. Elsewhere in Germany, a young Beethoven heard of the discovery and wondered in the marginalia of one of his compositions: “What will they think of my music on the star of Urania?” Another two centuries later, when Ann Druyan and Carl Sagan compose the Golden Record as a portrait of humanity in sound and image, Beethoven’s Fifth Symphony sails into the cosmos aboard the Voyager spacecraft alongside a piece by the composer Laurie Spiegel based on Kepler’s Harmony of the World.

Kepler was unambiguous about the broader political intent of his allegory. The year after his mother’s death, he wrote to an astronomer friend:

Would it be a great crime to paint the cyclopian morals of this period in livid colors, but for the sake of caution, to depart from the earth with such writing and secede to the moon?

Isn’t it better, he wonders in another stroke of psychological genius, to illustrate the monstrosity of people’s ignorance by way of the ignorance of imaginary others? He hoped that by seeing the absurdity of the lunar people’s belief that the Moon is the center of the universe, the inhabitants of Earth would have the insight and integrity to question their own conviction of centrality. Three hundred fifty years later, when fifteen prominent poets are asked to contribute a “statement on poetics” for an influential anthology, Denise Levertov — the only woman of the fifteen — would state that poetry’s highest task is “to awaken sleepers by other means than shock.” This must have been what Kepler aimed to do with The Dream — his serenade to the poetics of science, aimed at awakening.

In the wake of his mother’s witchcraft trial, Kepler made another observation centuries ahead of its time, even ahead of the seventeenth-century French philosopher François Poullain de la Barre’s landmark assertion that “the mind has no sex.” In Kepler’s time, long before the discovery of genetics, it was believed that children bore a resemblance to their mothers, in physiognomy and character, because they were born under the same constellation. But Kepler was keenly aware of how different he and Katharina were as people, how divergent their worldviews and their fates — he, a meek leading scientist about to turn the world over; she, a mercurial, illiterate woman on trial for witchcraft. If the horoscopes he had once drawn for a living did not determine a person’s life-path, Kepler couldn’t help but wonder what did — here was a scientist in search of causality. A quarter millennium before social psychology existed as a formal field of study, he reasoned that what had gotten his mother into all this trouble in the first place — her ignorant beliefs and behaviors taken for the work of evil spirits, her social marginalization as a widow — was the fact that she had never benefited from the education her son, as a man, had received. In the fourth section of The Harmony of the World — his most daring and speculative foray into natural philosophy — Kepler writes in a chapter devoted to “metaphysical, psychological, and astrological” matters:

I know a woman who was born under almost the same aspects, with a temperament which was certainly very restless, but by which she not only has no advantage in book learning (that is not surprising in a woman) but also disturbs the whole of her town, and is the author of her own lamentable misfortune.

In the very next sentence, Kepler identifies the woman in question as his own mother and proceeds to note that she never received the privileges he did. “I was born a man, not a woman,” he writes, “a difference in sex which the astrologers seek in vain in the heavens.” The difference between the fate of the sexes, Kepler suggests, is not in the heavens but in the earthly construction of gender as a function of culture. It was not his mother’s nature that made her ignorant, but the consequences of her social standing in a world that rendered its opportunities for intellectual illumination and self-actualization as fixed as the stars.

This essay is an excerpt from Figuring.

By Eliza Cook

This poem is in the public domain. Published in Poem-a-Day on December 29, 2019, by the Academy of American Poets.

About this poem: “Song for the New Year” originally appeared in Melaia and Other Poems (Charles Tilt, 1840).