“The sage shines but does not dazzle.”

–Lao Tzu in the Tao Te Ching

Azrya Cohen Bequer · Mar 23 · Medium.com

An Empowering Message to Humanity from Ayahuasca

No matter how complex a question I may have, when I take it to psychedelics I always receive an answer. So when the Coronavirus pandemic kicked into high gear, I brought the subject to my trusted mentor Ayahuasca to be illuminated.

Link to complete article: https://medium.com/@azrya/what-psychedelics-told-me-about-the-coronavirus-730a4a6b9714

To be human is to be a miracle of evolution conscious of its own miraculousness — a consciousness beautiful and bittersweet, for we have paid for it with a parallel awareness not only of our fundamental improbability but of our staggering fragility, of how physiologically precarious our survival is and how psychologically vulnerable our sanity. To make that awareness bearable, we have evolved a singular faculty that might just be the crowning miracle of our consciousness: hope.

Hope — and the wise, effective action that can spring from it — is the counterweight to the heavy sense of our own fragility. It is a continual negotiation between optimism and despair, a continual negation of cynicism and naïveté. We hope precisely because we are aware that terrible outcomes are always possible and often probable, but that the choices we make can impact the outcomes.

Art by the Brothers Hilts from A Velocity of Being: Letters to a Young Reader.

How to harness that uniquely human paradox in living more empowered lives in even the most vulnerable-making circumstances is what the great humanistic philosopher and psychologist Erich Fromm (March 23, 1900–March 18, 1980) explores in the 1968 gem The Revolution of Hope: Toward a Humanized Technology (public library), written in an era when both hope and fear were at a global high, by a German Jew who had narrowly escaped a dismal fate by taking refuge first in Switzerland and then in America when the Nazis seized power.

Erich Fromm

In a sentiment he would later develop in contemplating the superior alternative to the parallel lazinesses of optimism and pessimism, Fromm writes:

Hope is a decisive element in any attempt to bring about social change in the direction of greater aliveness, awareness, and reason. But the nature of hope is often misunderstood and confused with attitudes that have nothing to do with hope and in fact are the very opposite.

Half a century before the physicist Brian Greene made his poetic case for our sense of mortality as the wellspring of meaning in our ephemeral lives, Fromm argues that our capacity for hope — which has furnished the greatest achievements of our species — is rooted in our vulnerable self-consciousness. Writing well before Ursula K. Le Guin’s brilliant unsexing of the universal pronoun, Fromm (and all of his contemporaries and predecessors, male and female, trapped in the linguistic convention of their time) may be forgiven for using man as shorthand for the generalized human being:

Man, lacking the instinctual equipment of the animal, is not as well equipped for flight or for attack as animals are. He does not “know” infallibly, as the salmon knows where to return to the river in order to spawn its young and as many birds know where to go south in the winter and where to return in the summer. His decisions are not made for him by instinct. He has to make them. He is faced with alternatives and there is a risk of failure in every decision he makes. The price that man pays for consciousness is insecurity. He can stand his insecurity by being aware and accepting the human condition, and by the hope that he will not fail even though he has no guarantee for success. He has no certainty; the only certain prediction he can make is: “I shall die.”

What makes us human is not the fact of that elemental vulnerability, which we share with all other living creatures, but the awareness of that fact — the way existential uncertainty worms the consciousness capable of grasping it. But in that singular fragility lies, also, our singular resilience as thinking, feeling animals capable of foresight and of intelligent, sensitive decision-making along the vectors of that foresight.

Illustration by Margaret C. Cook for a rare 1913 edition of Leaves of Grass by Walt Whitman. (Available as a print.)

Fromm writes:

Man is born as a freak of nature, being within nature and yet transcending it. He has to find principles of action and decision making which replace the principles of instinct. He has to have a frame of orientation that permits him to organize a consistent picture of the world as a condition for consistent actions. He has to fight not only against the dangers of dying, starving, and being hurt, but also against another danger that is specifically human: that of becoming insane. In other words, he has to protect himself not only against the danger of losing his life but also against the danger of losing his mind. The human being, born under the conditions described here, would indeed go mad if he did not find a frame of reference which permitted him to feel at home in the world in some form and to escape the experience of utter helplessness, disorientation, and uprootedness. There are many ways in which man can find a solution to the task of staying alive and of remaining sane. Some are better than others and some are worse. By “better” is meant a way conducive to greater strength, clarity, joy, independence; and by “worse” the very opposite. But more important than finding the better solution is finding some solution that is viable.

Art by Pascal Lemaître from Listen by Holly M. McGhee

As we navigate our own uncertain times together, may a thousand flowers of sanity bloom, each valid so long as it is viable in buoying the human spirit it animates. And may we remember the myriad terrors and uncertainties preceding our own, which have served as unexpected awakenings from some of our most perilous civilizational slumbers. Fromm — who devoted his life to illuminating the inner landscape of the individual human being as the tectonic foundation of the political topography of the world — composed this book during the 1968 American Presidential election. He was aglow with hope that the unlikely ascent of an obscure, idealistic, poetically inclined Senator from Minnesota by the name of Eugene McCarthy (not to be confused with the infamous Joseph McCarthy, who stood for just about everything opposite) might steer the country toward precisely such pathways to “greater strength, clarity, joy, independence.”

McCarthy lost — to none other than Nixon — and the country plummeted into more war, more extractionism, more reactionary nationalism and bigotry. But the very rise of that unlikely candidate contoured hopes undared before — hopes some of which have since become reality and others have clarified our most urgent work as a society and a species. Fromm writes:

A man who was hardly known before, one who is the opposite of the typical politician, averse to appealing on the basis of sentimentality or demagoguery, truly opposed to the Vietnam War, succeeded in winning the approval and even the most enthusiastic acclaim of a large segment of the population, reaching from the radical youth, hippies, intellectuals, to liberals of the upper middle classes. This was a crusade without precedent in America, and it was something short of a miracle that this professor-Senator, a devotee of poetry and philosophy, could become a serious contender for the Presidency. It proved that a large segment of the American population is ready and eager for Humanization… indicating that hope and the will for change are alive.

Art from Trees at Night by Art Young, 1926. (Available as a print.)

Having given rein to his own hope and will for change in this book “appealing to the love for life (biophilia) that still exists in many of us,” Fromm reflects on a universal motive force of resilience and change:

Only through full awareness of the danger to life can this potential be mobilized for action capable of bringing about drastic changes in our way of organizing society… One cannot think in terms of percentages or probabilities as long as there is a real possibility — even a slight one — that life will prevail.

Complement The Revolution of Hope — an indispensable treasure rediscovered half a century after its publication and republished in 2010 by the American Mental Health Foundation — with Fromm on spontaneity, the art of living, the art of loving, the art of listening, and why self-love is the key to a sane society, then revisit philosopher Martha Nussbaum on how to live with our human fragility and Rebecca Solnit on the real meaning of hope in difficult times.

How few artists are not merely the sensemaking vessel for the tumult of their times, not even the deck railing of assurance onto which the passengers steady themselves, but the horizon that remains for other ships long after this one has reached safe harbor, or has sunk — the horizon whose steadfast line orients generation after generation, yet goes on shifting as each epoch advances toward new vistas of truth and possibility.

Walt Whitman (May 31, 1819–March 26, 1892) was among those rare few. The century and a half between his time and ours has been scarred by pandemics and pandemoniums, hallowed by staggering triumphs of the humanistic, scientific, and artistic imagination. We made Earth less habitable with two World Wars and discovered 4,000 potentially habitable worlds outside the Solar System. We gave all races and genders the ballot, and invented new ways of revoking human dignity and belonging. We beheld the structure of life in a double helix and the shape of civilizational shame in a mushroom cloud. We heard Bob Dylan, Nina Simone, and the sound of spacetime. But the most remarkable thing about it all, the most human and humanizing thing, is the awareness of this we as atomized into millions of individual I’s who have lived and loved and lost and made art and music and mathematics through it all.

Art by Lia Halloran for The Universe in Verse. Available as a print.

Whitman understood and celebrated this intricate tessellation of being, not only across society — “every atom belonging to me as good belongs to you” — but across space and time, nowhere more splendidly than in his sweeping, horizonless masterpiece “Crossing Brooklyn Ferry” — a poem that opens up a liminal space where past, present, and future tunnel into one another, a cave of forgotten and remembered dreams that invites you to press your outstretched living fingers into the palm-print of the dead, into Whitman’s generous open hand, and in doing so effects, to borrow Iris Murdoch’s marvelous phrase, “an occasion for unselfing.”

At a special miniature edition of The Universe in Verse on Governors Island, devoted to Whitman’s enchantment with science, astrophysicist Janna Levin — an enchantress of poetry, a writer of uncommonly poetic prose, and co-founder of the Whitman-inspired endeavor to build New York’s first public observatory — reanimated an excerpt from “Crossing Brooklyn Ferry” in a gorgeous reading emanating the elusive elemental truth Whitman so elegantly makes graspable in the poem:

from “CROSSING BROOKLYN FERRY”

by Walt Whitman

Flood-tide below me! I see you face to face!

Clouds of the west—sun there half an hour high—I see you also face to face.

Crowds of men and women attired in the usual costumes, how curious you are to me!

On the ferry-boats the hundreds and hundreds that cross, returning home, are more curious to me than you suppose,

And you that shall cross from shore to shore years hence are more to me, and more in my meditations, than you might suppose.

The impalpable sustenance of me from all things at all hours of the day,

The simple, compact, well-join’d scheme, myself disintegrated, every one disintegrated yet part of the scheme,

The similitudes of the past and those of the future.

[…]

Others will enter the gates of the ferry and cross from shore to shore,

Others will watch the run of the flood-tide,

Others will see the shipping of Manhattan north and west, and the heights of Brooklyn to the south and east,

Others will see the islands large and small;

Fifty years hence, others will see them as they cross, the sun half an hour high,

A hundred years hence, or ever so many hundred years hence, others will see them,

Will enjoy the sunset, the pouring-in of the flood-tide, the falling-back to the sea of the ebb-tide.

It avails not, time nor place—distance avails not,

I am with you, you men and women of a generation, or ever so many generations hence,

Just as you feel when you look on the river and sky, so I felt,

Just as any of you is one of a living crowd, I was one of a crowd,

Just as you are refresh’d by the gladness of the river and the bright flow, I was refresh’d,

Just as you stand and lean on the rail, yet hurry with the swift current, I stood yet was hurried.

[…]

What is it then between us?

What is the count of the scores or hundreds of years between us?

Whatever it is, it avails not—distance avails not, and place avails not,

I too lived, Brooklyn of ample hills was mine,

I too walk’d the streets of Manhattan island, and bathed in the waters around it,

I too felt the curious abrupt questionings stir within me

[…]

It is not upon you alone the dark patches fall,

The dark threw its patches down upon me also,

The best I had done seem’d to me blank and suspicious,

My great thoughts as I supposed them, were they not in reality meagre?

Nor is it you alone who know what it is to be evil,

I am he who knew what it was to be evil,

I too knitted the old knot of contrariety,

Blabb’d, blush’d, resented, lied, stole, grudg’d,

Had guile, anger, lust, hot wishes I dared not speak,

Was wayward, vain, greedy, shallow, sly, cowardly, malignant,

The wolf, the snake, the hog, not wanting in me,

The cheating look, the frivolous word, the adulterous wish, not wanting,

Refusals, hates, postponements, meanness, laziness, none of these wanting,

Was one with the rest, the days and haps of the rest,

Was call’d by my nighest name by clear loud voices of young men as they saw me approaching or passing,

Felt their arms on my neck as I stood, or the negligent leaning of their flesh against me as I sat,

Saw many I loved in the street or ferry-boat or public assembly, yet never told them a word,

Lived the same life with the rest, the same old laughing, gnawing, sleeping,

Play’d the part that still looks back on the actor or actress,

The same old role, the role that is what we make it, as great as we like,

Or as small as we like, or both great and small.

For other highlights from the first three years of The Universe in Verse, as we labor on a virtual show amid the strangeness of this de-atomized season of body and spirit, savor Levin reading “A Brave and Startling Truth” by Maya Angelou, “Planetarium” by Adrienne Rich, Amanda Palmer reading Neil Gaiman’s tribute to Rachel Carson and his feminist poem about the history of science, Marie Howe reading her tribute to Stephen Hawking, Regina Spektor reading “Theories of Everything” by Rebecca Elson, and Neri Oxman reading Whitman, then revisit Whitman on optimism as a mighty force of resistance, women’s centrality to democracy, how to keep criticism from sinking your soul, and what makes life worth living.

Updated 7th December 2017 (cnn.com)

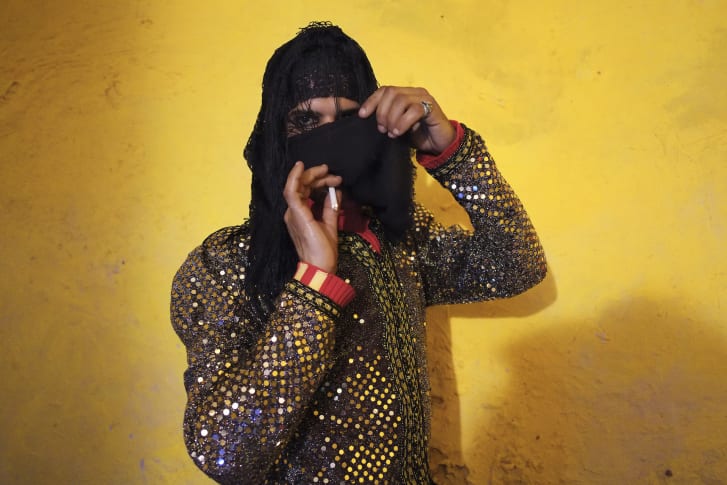

Scarlett Coten’s series, “Mectoub,” is a collection of portraits about masculinity in North Africa and the Middle East.

Masculinity in the Middle East: Confronting stereotypes through photography

Written by Katy Scott, CNN

Terrorist. Misogynist. Fanatic. The portrayal of Arab men can often be one-dimensional and unflattering, particularly in Western media. But two female photographers have sought to confound these stereotypes.

The idea was to present these men as they are, beyond the stereotype, without labeling them as anything.

Scarlett Coten’s “Mectoub” series — recently on display at the Arab World Institute in Paris — explores the subject of masculinity across the region. Iraqi-Canadian photographer Tamara Abdul Hadi, meanwhile, is currently putting together a book of photos on the subject.

A portrait of Abdel from the “Mectoub” series shot in Marrakech, Morocco. Credit: Scarlett Coten

Inspired by the shifting atmosphere following the Arab Spring and her own curiosity about masculine identity, French photographer Coten spent five years from 2012 to 2016 photographing subjects in Algeria, Egypt, Jordan, Lebanon, Morocco, Tunisia and the West Bank.

Her portraits are unconventional, with men staring directly at the viewer, juxtaposed against crumbling buildings and colorful interiors. Some wear high heels, others wrap themselves in scarves or fur.

“The goal is not to portray them as feminine,” Coten tells CNN. “In a face-to-face meeting we are vulnerable because we reveal ourselves. And this unveiling gives birth to something that is more sensitive.”

Coten won the 2016 Leica Oskar Barnack Award for her series of striking photographs.

“Mectoub” is a play on words between the Arabic “maktub” which means “it is written” and the colloquial French word “mec” for “guy.”

While Coten spent 15 years working as a photographer in Arab countries — including a stint in the barren Egyptian desert living with Bedouin tribes — she remained conscious of the fact that she was foreign, French and, most importantly, female.

But she feels her role as an outsider played to her advantage as she says the men felt they could confide in her. “They can tell me, ‘I do not believe in Allah’, ‘I do not practice Ramadan’ … things they do not tell their loved ones.”

This is perhaps why the men lay bare their true identities in the photographs, she says.

A portrait of Khalid in Amman, Jordan. Credit: Scarlett Coten

Coten likens her creative process to a performance, except one with little direction. She simply tells her subjects to try not to pose and to look directly at her.

“To look someone in the eyes puts us in a situation of vulnerability, and therefore to be closer to who we truly are,” she says.

In most of the portraits the men are looking directly into Coten’s eyes, and not at the lens.

The subject matter explored by Coten echoes that of Iraqi-Canadian photographer Tamara Abdul Hadi.

She drew from her personal experiences as an Arab woman to capture the beauty and vulnerability of her male counterparts for her 2009 to 2014 project, “Picture an Arab Man,” which she is currently working into a book.

Her intimate shots implore the viewer to reconsider their preconceptions.

Abdul Hadi describes the men she photographed as being completely taken aback by how “soft” they turned out — a far cry from many mass media portrayals of men in the region.

“A lot of that softness maybe they don’t see in themselves unless you show it to them having captured it,” she says.

Ghazwan, Iraq

Tamara Abdul Hadi’s photography series “Picture an Arab Man” encourages the viewer to reconsider his or her preconceived notions about men in the Middle East. Credit: Tamara Abdul Hadi

A report by gender equality organization Promundo and UN Women earlier this year found that a majority of men in the Middle East don’t support gender parity, perhaps suggesting some of the harsher perceptions around Arab patriarchy.

Growing up in the UAE and later Montreal with Iraqi parents, however, Abdul Hadi says her gentler representation of the Arab man is exactly how she sees those that surrounded her throughout her life.

She chose to photograph the men she encountered on the streets up-close and semi-nude, so that the viewer is not given any clue about their culture or religion.

“I wanted them all to melt into one look of an Arab man who is human and who is sometimes vulnerable, sometimes gentle.”

Both photographers say they hope their work will encourage people to think differently about the Middle East and particularly about men in the region.

For Abdul Hadi, the most rewarding response to her series is when people tell her they’ve never been that close to an Arab man.

For Coten, it is when she exhibits in the Middle East and Arabic people thank her for her work.

“It is important to them that I am showing a different picture of these men,” says Coten. “And they find themselves in these images.”

Link to all 11 photos: https://www.cnn.com/style/article/arab-men-scarlett-coten-tamara-abdul-hadi-photography/?hpt=ob_blogfooterold&utm_medium=email

London Real

Watch the full episode for FREE on our website here: https://londonreal.tv/the-fake-news-a…

Gregg Braden is a New York Times best-selling author, researcher, educator, and lecturer. Right now the world is gripped by the Covid-19 pandemic and global lockdown, with over 1 billion people confined to their homes in order to stop the spread of the deadly coronavirus. Economic recession is all but certain, and personal anxiety and panic are running very high. Gregg is known as a pioneer in bridging modern science, ancient wisdom, and human potential, who has been invited to speak in front of The United Nations, Fortune 500 companies, and the U.S. military. His books include The God Code, The Divine Matrix, The New Human Story and his most recent, The Science of Self-Empowerment.

GREGG BRADEN: TWITTER: https://twitter.com/GreggBraden INSTAGRAM: https://www.instagram.com/gregg.braden/ WEBSITE: https://www.greggbraden.com/


Translators: Mike Zonta, Melissa Goodnight, Richard Branam, Hanz Bolen

SENSE TESTIMONY: Attachments can cause infectious disease rather than benevolent transmission of intelligence.

5th Step Conclusions:

1) I/Truth, Legendary, the only intender, the only doer, the only infector, touches Itself with love, desires nothing, possesses all, transmits Its wellness to Itself effortlessly, automatically, easily. OR: Truth wills wellness.

2) Infinite Consciousness is truly apprehending, and freely releasing, Its Own perfectly ordered function — by fully comprehending, and thoroughly communicating, the ever-present goodness of Cosmic Intention.

3) Truth is Androgynous Identity, this Intimate Affection, devotion, fondness, Consciousness Aware Depository of Intelligence, Being the Origination of Beneficial Cause, the Substance exerting its Omni-power, the Act of Infectious Harmony.

4) The acceptance of Integrity is Universal, a presence at ease, sending a message of Goodness. Thus all individuations of Being are Good Neighbors, Fellow Dwellers in Integrity, ably accepting, collecting, sending, receiving value.

All Translators are welcome to join this group. See Weekly Groups page/tab.

Intelligence Squared

Want to join the debate? Check out the Intelligence Squared website to hear about future live events and podcasts: http://www.intelligencesquared.com

Filmed at the Royal Geographical Society on 23rd September 2015.

Myths. We tend to think they’re a thing of the past, fabrications that early humans needed to believe in because their understanding of the world was so meagre. But what if modern civilisation were itself based on a set of myths? This is the big question posed by Professor Yuval Noah Harari, author of Sapiens: A Brief History of Humankind, which has become one of the most talked about bestsellers of recent years. In this exclusive appearance for Intelligence Squared, Harari will argue that all political orders are based on useful fictions which have allowed groups of humans, from ancient Mesopotamia through to the Roman empire and modern capitalist societies, to cooperate in numbers far beyond the scope of any other species.

To give an example, Hammurabi, the great ruler of ancient Babylon, and the US founding fathers both created well-functioning societies. Hammurabi’s was based on hierarchy, with the king at the top and the slaves at the bottom, while the Americans’ was based on freedom and equality between all citizens. Yet the idea of equality, Harari will claim, is as much a fiction as the idea that a king or rich nobleman is ‘better’ than a humble peasant. What made both of these societies work was the fact that within each of them everyone believed in the same set of imagined underlying principles.

In a similar vein, money is a fiction that depends on the trust that we collectively put in it. The fact that it is a ‘myth’ has not impeded its usefulness. It has become the most universal and efficient system of mutual trust ever devised, allowing the development of global trade networks and sophisticated modern capitalism.

Professor Harari came to the Intelligence Squared stage to explain how the fictions that we believe in are an inseparable part of human culture and civilisation.

by Neil Shubin (Goodreads Author)

The author of the best-selling Your Inner Fish gives us a lively and accessible account of the great transformations in the history of life on Earth–a new view of the evolution of human and animal life that explains how the incredible diversity of life on our planet came to be.

Over billions of years, ancient fish evolved to walk on land, reptiles transformed into birds that fly, and apelike primates evolved into humans that walk on two legs, talk, and write. For more than a century, paleontologists have traveled the globe to find fossils that show how such changes have happened.

We have now arrived at a remarkable moment–prehistoric fossils coupled with new DNA technology have given us the tools to answer some of the basic questions of our existence: How do big changes in evolution happen? Is our presence on Earth the product of mere chance? This new science reveals a multibillion-year evolutionary history filled with twists and turns, trial and error, accident and invention.

In Some Assembly Required, Neil Shubin takes readers on a journey of discovery spanning centuries, as explorers and scientists seek to understand the origins of life’s immense diversity.


(Submitted by Melissa Goodnight, H.W., M.)