- By Troy Wolverton | Examiner staff writer

- Mar 15, 2026 (SFExaminer.com)

The negative effects of artificial intelligence — environmental degradation, its use in war — are an inevitable result of its creators’ guiding philosophy, according to a new documentary.

In her new film, “Ghost in the Machine,” documentarian Valerie Veatch makes the case that such downsides are a natural consequence of the ideology that has driven the technology’s development and shaped how its developers view the world: one of white supremacy and dehumanization.

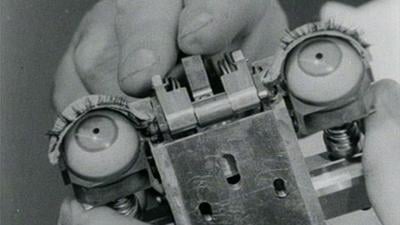

In the work, which debuted at the Sundance Film Festival in January, she argues that the idea of general intelligence and the statistical tools used to try to measure it came out of eugenics. Those concepts are foundational both to the idea of creating a “human-level” artificial general intelligence and to the statistical models underlying the technology.

And she asserts that many of the key figures in the history of AI’s development — including William Shockley, who essentially founded Silicon Valley, and John McCarthy, who coined the term “artificial intelligence” — were either directly tied to eugenicists or openly expressed racist or misogynistic ideas.

Veatch, whose previous works looked critically at social media, said she didn’t set out to make a negative film about AI. Instead, she said, she followed her research, which included digging into archives and interviewing dozens of experts — many of whom have written critically of the technology.

In advance of the film’s online screening March 27, on AI Literacy Day, The Examiner spoke with Veatch about the work, her views on AI, and her choice to intersperse real and AI-generated footage within the film. The following conversation has been edited for length and clarity.

Why make a film about AI and its dangers?

October 2024, my friend signed me up for the Sora early access program for OpenAI, where we were testing this top-secret technology that was meant to revolutionize the film industry. Playing around with [it, it created] highly sexist and racist videos [unbidden]. I was like, “Wow, what is this crazy technology?” As a female filmmaker and a feminist, all of my critical-theory bells started ringing.

The kind of feedback I got was very much like, “Oh, it’s kind of cringe to be asking these questions,” or, “There’s really nothing that we can do. All AI functions on bias, and you’re being silly talking about this.”

And so I just started researching and looking around. One of the first papers I came across was Abeba Birhane’s paper on how misogyny makes its way into datasets.

I started cold-emailing various folks who’d written papers that I thought were really interesting. I ended up talking to like 40 of the most amazing, wonderful academics and philosophers and linguists and sociologists. By the end of it, I was so amazed to see that a film had formed out of these conversations, and that everybody is telling the story all together, like a chorus.

It sounds like you went into it with a somewhat critical eye, especially after your Sora experience. To what extent do you think that influenced who you talked to and the perspectives that are told in the film?

It’s interesting that you say that, because I really wanted this technology to be this liberatory, emergent vessel for all of human knowledge. All of the rhetoric around LLMs was something I really wanted to be true. I didn’t want it to be the story it ended up being.

From where I’m sitting, there isn’t a way that you can look at this technology and walk away being like, “Well, there’s actually another side to this,” or like, “Let’s take a more balanced view,” because this is a technology that is rotten from the core. It is extractive. It is [exploitative]. It relies on colonial logics and the logics of eugenics and race science.

One of the interesting visual choices you made was to go back and forth between AI-generated imagery and real imagery. You have these consistent labels of “AI” and “Not AI” you use in the film. What was your thinking with that?

Well, it was a provocation. I initially was like, obviously, don’t want to touch the stuff. AI is terrible. It looks awful. It gives you this ick feeling watching it. And especially after my experience with OpenAI, I was like, “Gross!” But there’s a tradition in feminist video art of turning the aesthetic of the oppressor upon itself.

I kept this footage in the film — some of it is made with Sora, and some of it is generated with Adobe’s Firefly — because I really wanted somebody to be like, “Hey, you can’t actually do this,” because it just feels confrontational in a way that makes me happy.

Sure enough, with PBS, because they actually have standards and practices, they’re like, you can’t broadcast Sora footage, which I’m so relieved to hear. So I’m doing a reedit of the film, and one of the things is just taking out all of that Sora footage. I’m happy to do it.

There’s this immense effort on the part of big tech companies to make generative-AI technologies feel like they’re the next step in a natural progression of digital storytelling. Like, we had non-linear editing, and we had digital color correcting, and now we have [this]. But this is a fundamentally different technology that is the theft of authorship on a fundamental level.

One of the places I thought that worked well, flipping back and forth between AI and not AI, was this clip I had never seen before where Mark Zuckerberg is in an Elvis outfit and dancing on a piano. I had to actually look to see whether that was AI or not.

I know. As the main characters of the film become increasingly untethered from the reality of the technologies they’re unleashing on the world and their behavior becomes more absurd, the things they’re saying become more absurd, your eye begins to search for, like, “Surely, this is AI.” And “Not AI” exists as a confirmation that, yes, this dystopia is real. It’s happening.

In the film, you go into many of the downsides of AI technology. What do you see as the technology’s biggest or fundamental flaw?

The dehumanization that occurs because of the rhetoric of AI. Like when my six-year-old comes home and she says her classmate said that he doesn’t need to learn anything because AI is just going to do it. It’s that lie of superintelligence and the way that it seeps into the psyche of our children and our communities that dehumanizes us, disconnects us from the truth of living.

There’s a clip I’m adding into the film, now that I’m re-editing it, of Sam Altman talking about how everyone loves to complain about the environmental crisis and the environmental cost of these systems, but [they should] look at how much resources it’s taken a human to get to the age of 20 and be as smart as they are or how many resources humanity has taken up to evolve to being this high in our IQ level now.

So where does that go, Sam? Do you say, OK, are five median IQ folks worth eight gallons of water? On what matrix of resource allocation are you entering this information? Why are you even framing it like this? And it’s that framing that is the problem.

Do you use any AI tools?

No, I do not use AI at all for anything. I send long, passionate emails to my children’s school if I even hear the word AI mentioned. I am like, “Correct your framing. Let’s talk about ‘algorithmic systems processing data’ or nothing at all.”

I would actually want everyone to stop using the phrase “artificial intelligence.” As we see in the film, it’s just an invented term for some guy’s weird side project to create human-level intelligence. And [his] whole idea of what that would look like was a computer system playing chess, a bounded set of rules. AI is not a real thing, and I’m exhausted by how it feels like a losing battle every day.

Do you see any positive uses for AI?

No. There are technologies that would be called AI, but back in the day, we just called it spell check. Referencing a bounded set of data points that are in the dictionary and comparing them to the word you’ve written? Sure, that’s great.

Or, the weather, right? That’s a kind of interesting use of algorithmic processing of data points — “typically it is 70 degrees nine out of 10 days of this year.” Those are grounded datasets you can process algorithmically.

But when we get into the framing of chatbot products as if they are these bastions of neutral knowledge, that’s where it starts to fall apart. So I think there are no good uses of AI, because AI is a problematic concept, and it’s an idea that dehumanizes us.

If you have a tip about tech, startups or the venture industry, contact Troy Wolverton at twolverton@sfexaminer.com or via text or Signal at (415) 515-5594.